| Workflow Orchestration | Provides a flexible and portable orchestration layer for ML workflows across various environments | Offers robust orchestration within the Databricks ecosystem, optimized for Spark-based workflows |

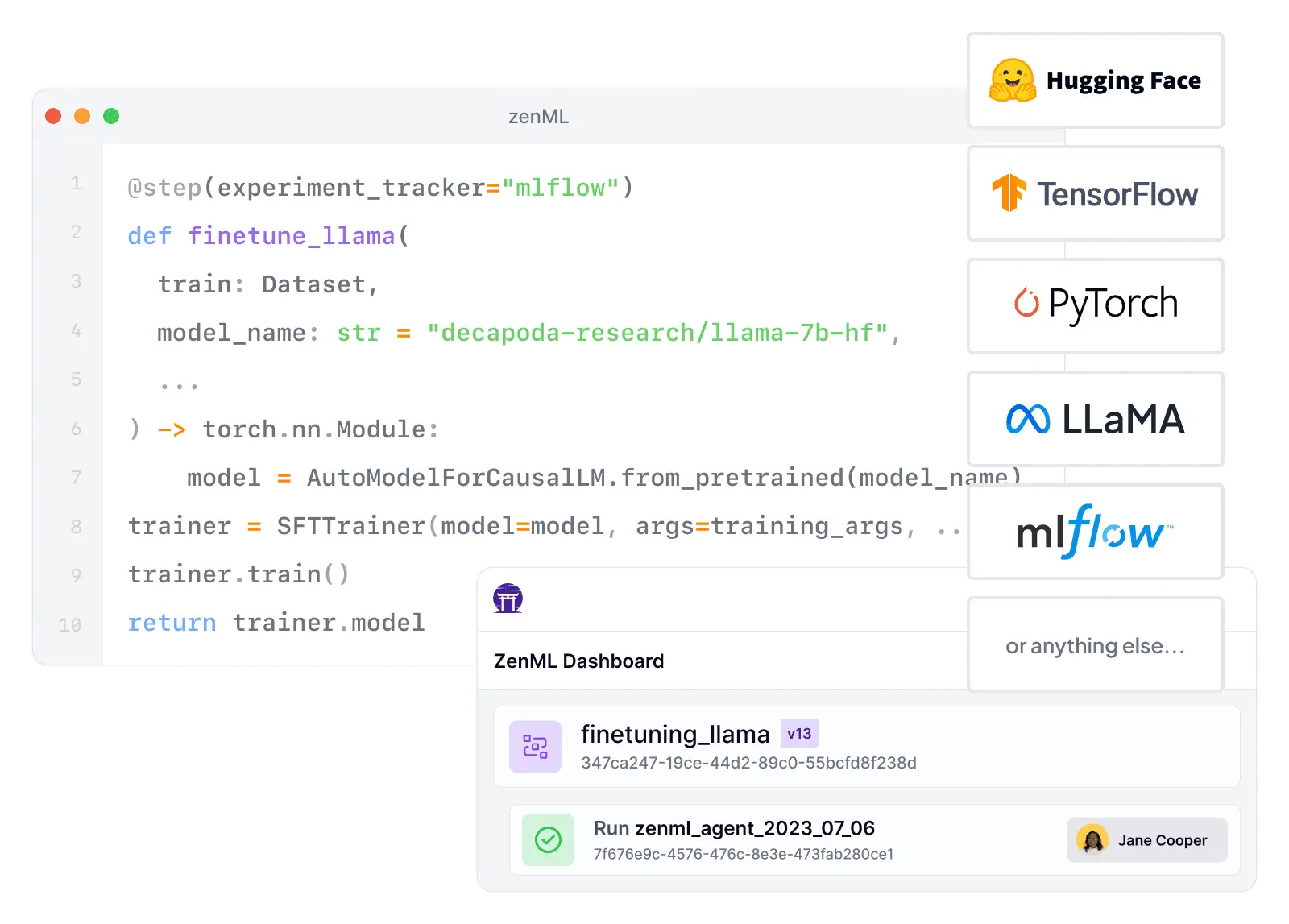

| Integration Flexibility | Integrates with a wide range of MLOps tools and cloud services | Focuses primarily on integrations within the Databricks ecosystem and with select partner tools |

| Vendor Lock-In | Enables migration between different tools and cloud providers without rewriting pipelines | Tightly coupled to the Databricks ecosystem, which may lead to vendor lock-in |

| Setup Complexity | Lightweight setup with minimal infrastructure requirements | More complex setup, often requiring dedicated Databricks clusters and workspace configuration |

| Learning Curve | Gentle learning curve with familiar Python-based pipeline definitions | Steeper learning curve, especially for teams new to Spark and the Databricks ecosystem |

| Scalability | Scalable architecture that can grow with your needs, leveraging various compute backends | Highly scalable, particularly for big data processing with built-in Spark capabilities |

| Cost Model | Open-source core with optional paid features, allowing for cost-effective scaling | Subscription-based pricing model, which can be costly for smaller teams or projects |

| Data Processing | Flexible data processing capabilities, integrating with various data tools and frameworks | Optimized for big data processing with native Apache Spark integration |

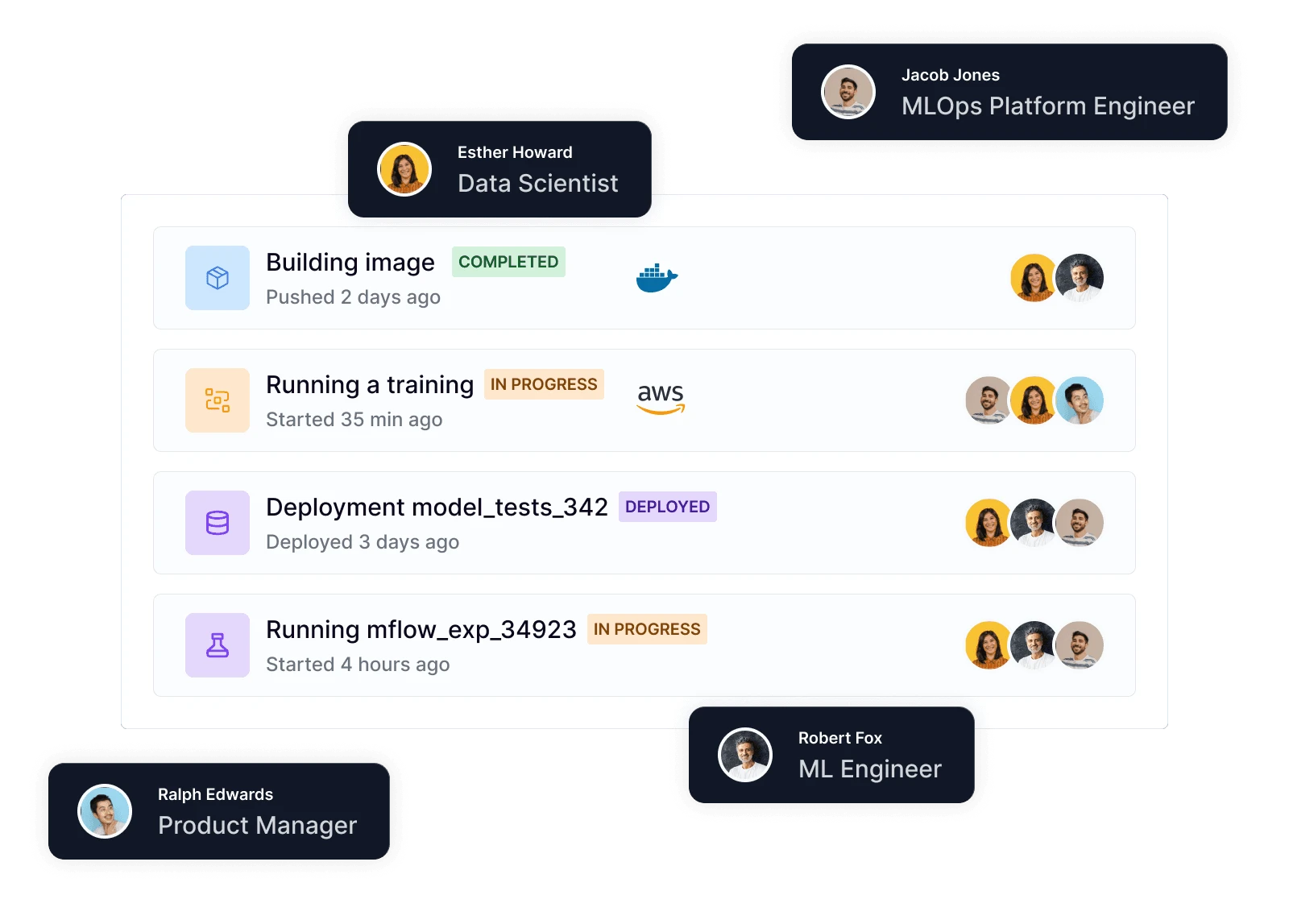

| Collaborative Development | Supports collaboration through version control and pipeline sharing | Offers collaborative notebooks and workspace management for team development |

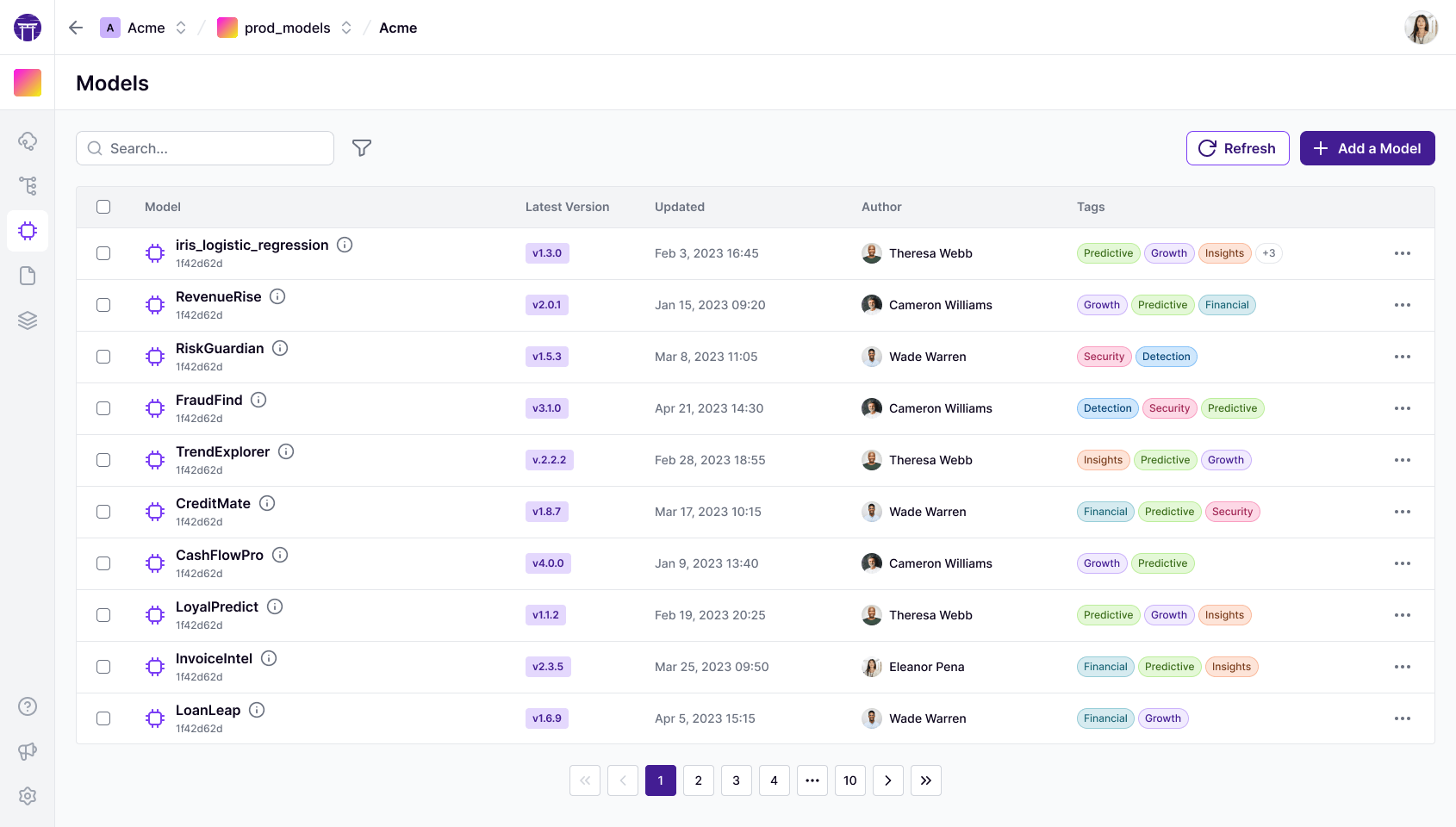

| ML Framework Support | Supports a wide range of ML frameworks and libraries | Supports popular ML frameworks, with optimizations for distributed training on Spark |

| Feature Store Integration | Integrates with feature stores like Feast, and orchestrates feature engineering pipelines as part of your ML workflow | Provides a built-in Feature Store within Unity Catalog for feature discovery, lineage, and online/offline serving |

| Model Monitoring & Drift Detection | Integrates with monitoring tools like Evidently and Great Expectations, orchestrated as pipeline steps for drift detection and data quality | Offers inference-table-driven monitoring with built-in data profiling and drift metrics via Lakehouse Monitoring |

| Governance & Access Control | Provides RBAC, artifact lineage tracking, and a model control plane for approval workflows and audit trails | Delivers fine-grained access control, auditing, and lineage through Unity Catalog across data and ML assets |

| Auto-Retraining Triggers | Supports scheduled pipelines and event-driven triggers that can initiate retraining when drift is detected or performance falls below a threshold | Enables auto-retraining via Databricks Jobs with scheduling, triggers, and integration with monitoring alerts |
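
The retraining row above describes triggers that fire on drift or on a performance threshold. The decision logic behind such a trigger can be sketched in plain Python. This is a minimal, platform-neutral illustration, not the ZenML or Databricks API: `MonitoringSnapshot`, `should_retrain`, `run_monitoring_cycle`, and the default thresholds are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class MonitoringSnapshot:
    """Hypothetical summary of one monitoring run (not a ZenML or Databricks type)."""
    drift_score: float  # assumed: share of features flagged as drifted, 0.0 to 1.0
    accuracy: float     # assumed: model accuracy on recently labelled data


def should_retrain(snapshot: MonitoringSnapshot,
                   max_drift: float = 0.3,
                   min_accuracy: float = 0.85) -> bool:
    """Return True when drift exceeds max_drift or accuracy falls below min_accuracy."""
    return snapshot.drift_score > max_drift or snapshot.accuracy < min_accuracy


def run_monitoring_cycle(snapshot: MonitoringSnapshot,
                         retrain: Callable[[], None]) -> str:
    """Invoke the supplied retrain callback only when a threshold is breached."""
    if should_retrain(snapshot):
        retrain()
        return "retrained"
    return "skipped"
```

In practice the `retrain` callback would be whatever starts the training pipeline on the chosen platform, such as a scheduled pipeline run or a job trigger, and the thresholds would come from the monitoring tool's own metrics rather than hard-coded defaults.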