Flexibility

Freedom to Choose, Power to Switch

One framework for all your MLOps and LLMOps needs, with the flexibility to change as you grow

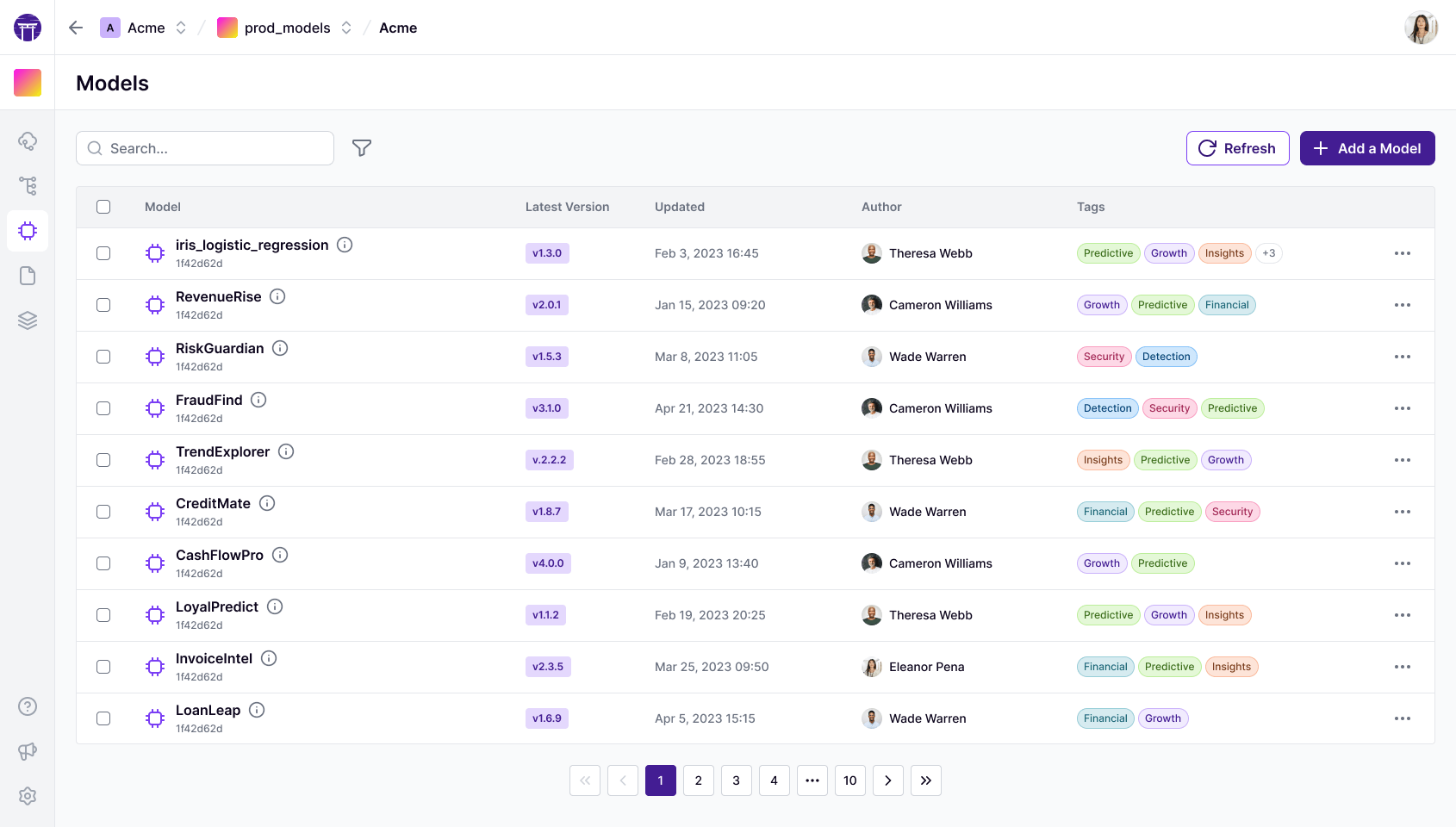

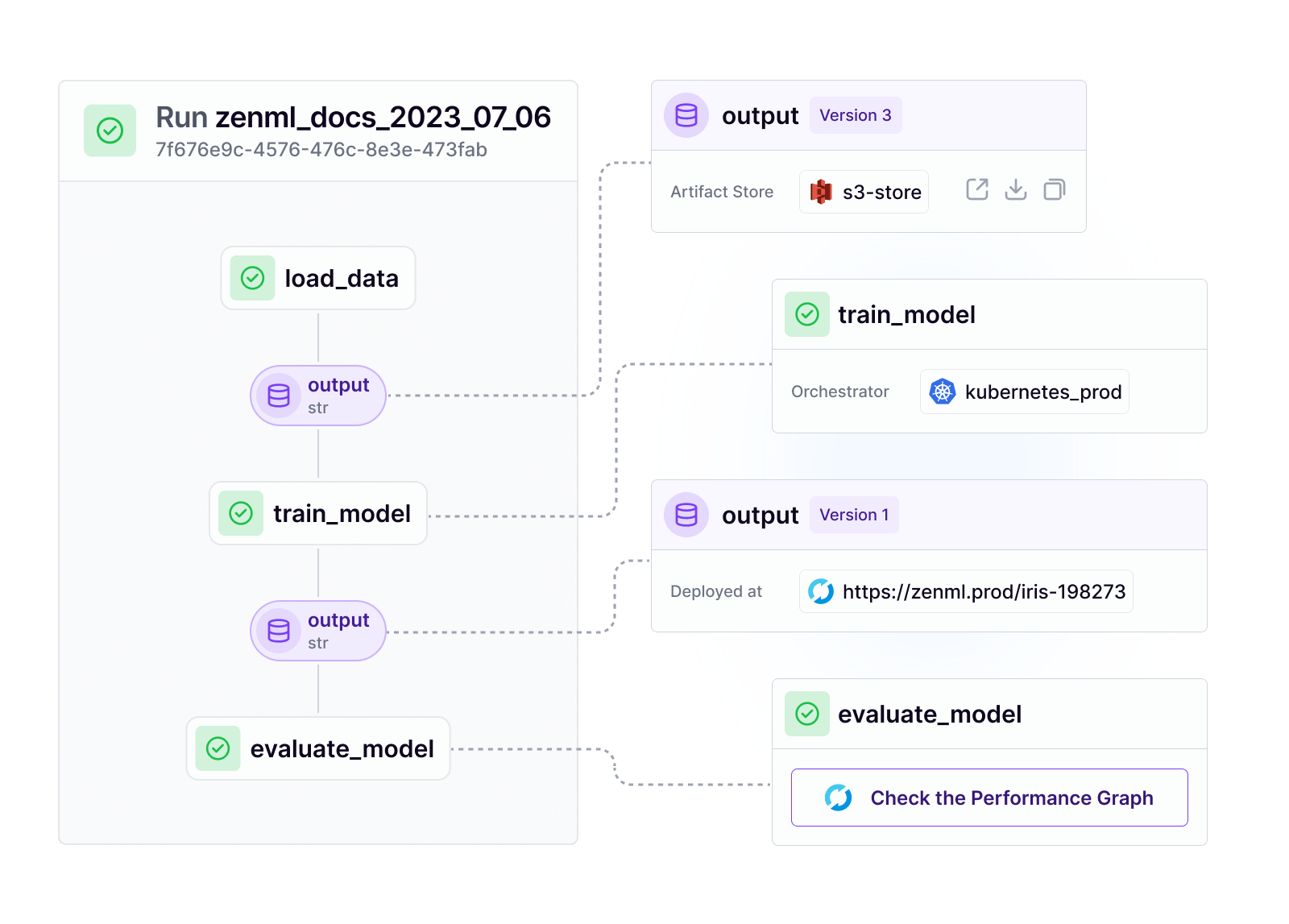

Seamless Backend Interoperability

Liberate your ML pipelines from infrastructure constraints.

- Effortlessly switch between orchestration engines and artifact stores.

- Multi-cloud support ensures true vendor independence.

- Maintain consistent workflows across diverse environments.
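The idea behind backend switching can be sketched in a few lines of plain Python. This is a conceptual illustration only, not ZenML's API: `Stack`, `InMemoryArtifactStore`, and `run_pipeline` are hypothetical names invented for this example. The point it shows is that the pipeline steps are defined once, and only the stack they run against changes.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class InMemoryArtifactStore:
    """Hypothetical stand-in for any artifact store (local disk, cloud bucket, ...)."""
    name: str
    artifacts: Dict[str, Any] = field(default_factory=dict)

    def save(self, key: str, value: Any) -> None:
        self.artifacts[key] = value


@dataclass
class Stack:
    """Hypothetical bundle of backend choices that a pipeline runs against."""
    orchestrator: str
    artifact_store: InMemoryArtifactStore


def run_pipeline(steps: List[Callable[[Any], Any]], stack: Stack, data: Any) -> Any:
    """Run the steps in order, saving each step's output to the stack's store."""
    for step in steps:
        data = step(data)
        stack.artifact_store.save(step.__name__, data)
    return data


def normalize(values: List[float]) -> List[float]:
    """Scale values so the largest becomes 1.0."""
    top = max(values)
    return [v / top for v in values]


def mean(values: List[float]) -> float:
    """Average a list of values."""
    return sum(values) / len(values)


# The pipeline is defined once, with no reference to any backend.
pipeline_steps = [normalize, mean]

# Two hypothetical stacks: only the infrastructure labels differ.
local_stack = Stack("local", InMemoryArtifactStore("local-disk"))
cloud_stack = Stack("kubernetes", InMemoryArtifactStore("cloud-bucket"))

local_result = run_pipeline(pipeline_steps, local_stack, [1.0, 2.0, 4.0])
cloud_result = run_pipeline(pipeline_steps, cloud_stack, [1.0, 2.0, 4.0])
```

Both runs produce the same result and record the same intermediate artifacts, because the step code never mentions the orchestrator or the store. A real setup would replace the in-memory store and the string labels with actual infrastructure components.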

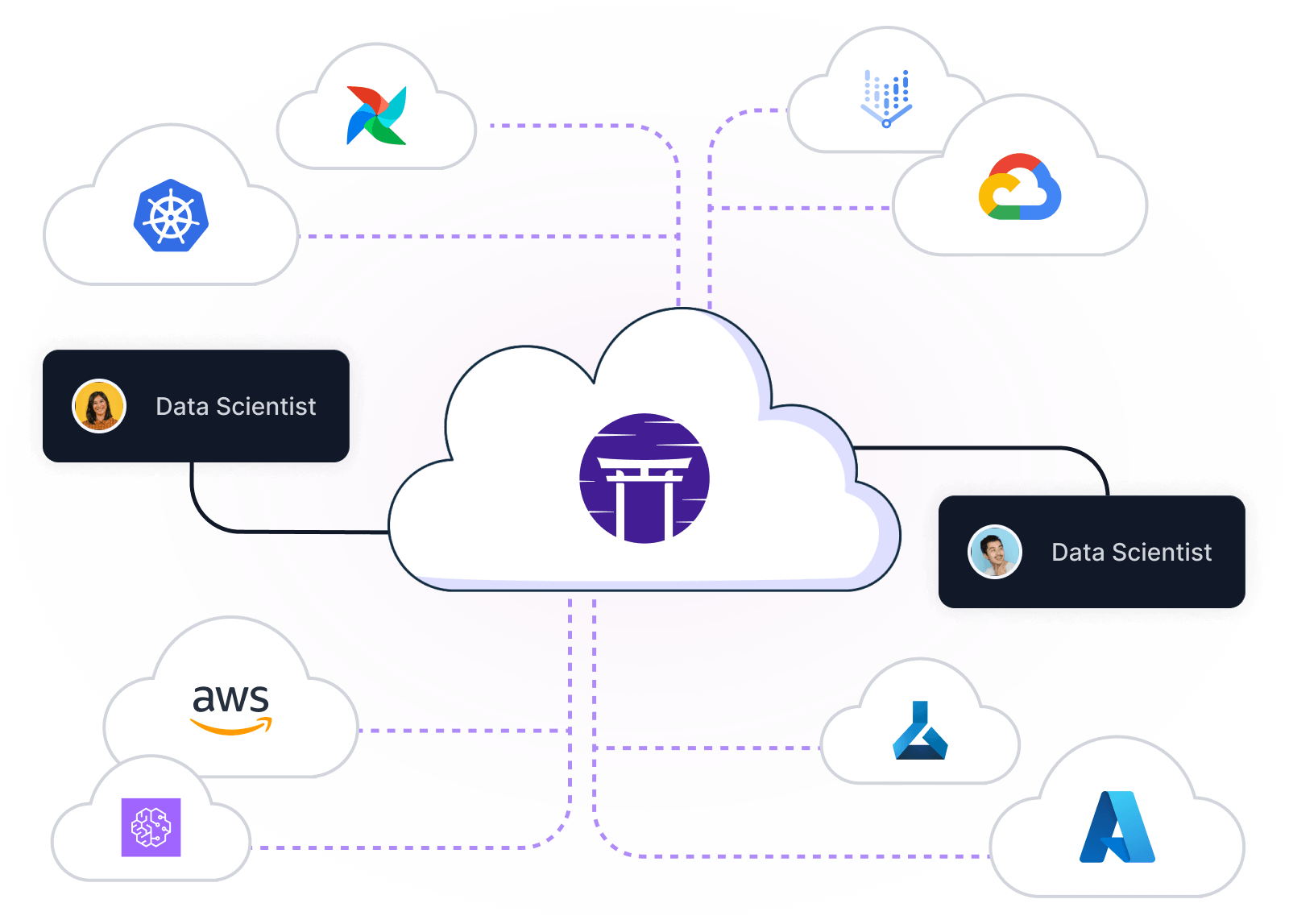

Unified ML and GenAI Framework

Optimize resource utilization across traditional ML and generative AI workloads.

- Access cost-effective and readily available GPUs without code modifications.

- Seamlessly transition between CPU and GPU environments.

- Leverage unified abstractions for diverse ML and GenAI tasks.

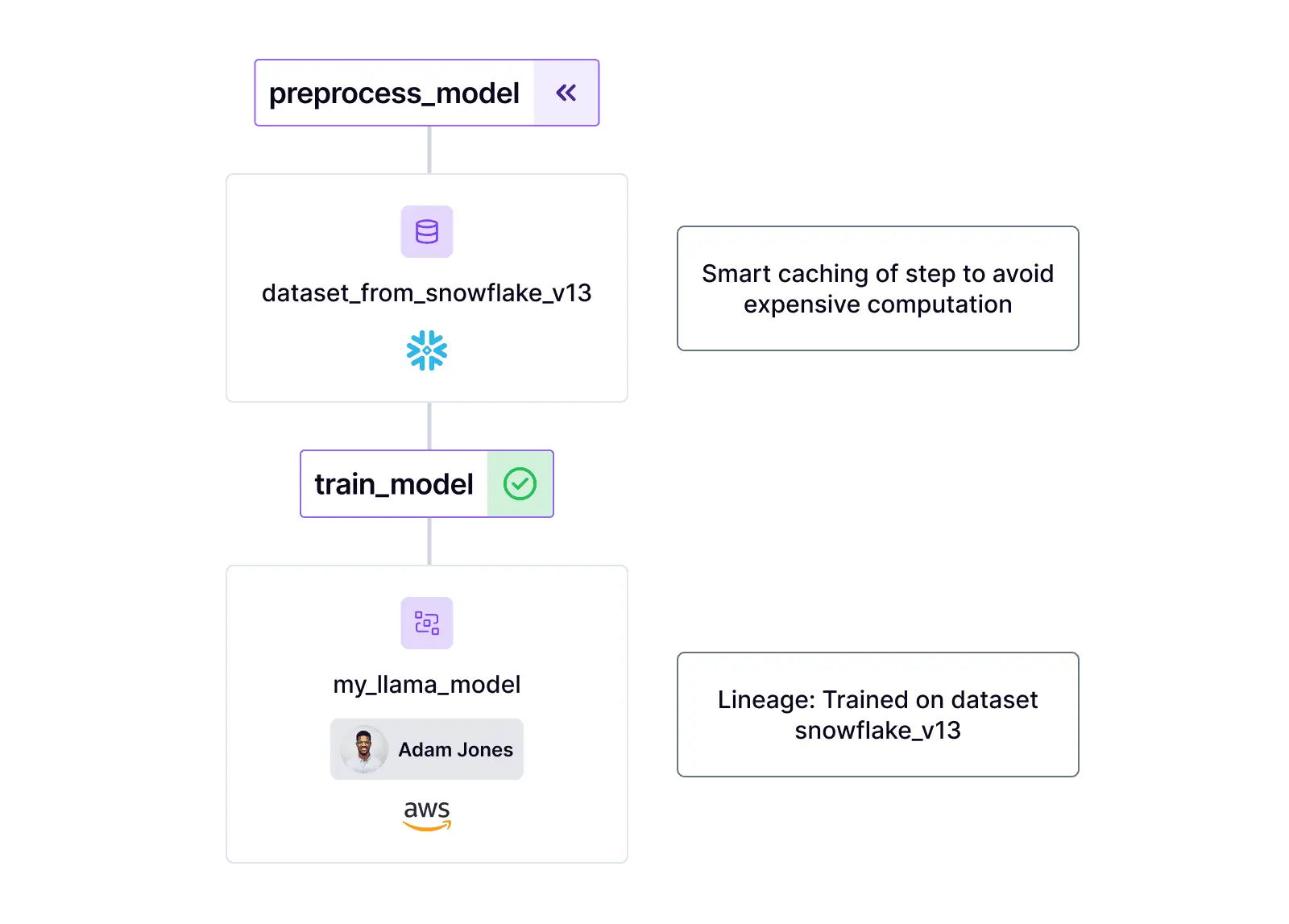

Customizable LLMOps Stack

Tailor your large language model operations to your specific requirements.

- Automate updating and testing of your RAG applications.

- Flexible integrations with leading fine-tuning frameworks, Hugging Face Accelerate, Axolotl, PyTorch Lightning and more.

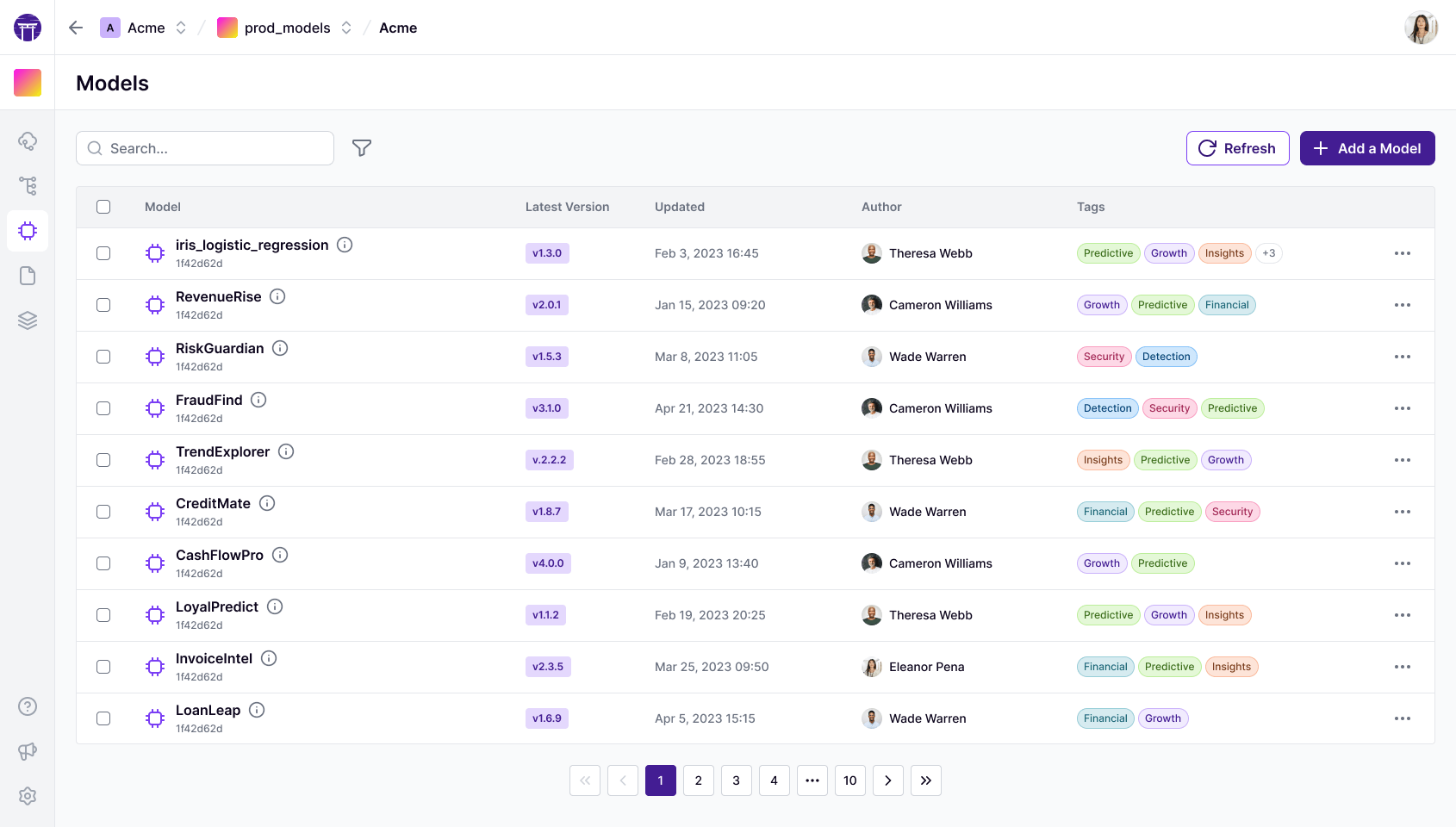

- Get a central picture of all LLM models with prompts, metrics, and more.

With ZenML, we're no longer tied to a single cloud provider. The flexibility to switch backends between AWS and GCP has been a game-changer for our team.

Dragos Ciupureanu

Koble.ai

Unify Your ML and LLM Workflows

- Free, powerful open-source MLOps foundation

- Works with any infrastructure

- Upgrade to managed Pro features