Breaking Down Silos: MLOps Challenges in Emerging Markets

In recent years, machine learning operations (MLOps) has spread far beyond traditional tech hubs. Organizations embracing AI/ML initiatives face distinct challenges in implementing robust MLOps practices, particularly in emerging markets, where cloud adoption patterns and infrastructure requirements differ significantly from those in Western markets.

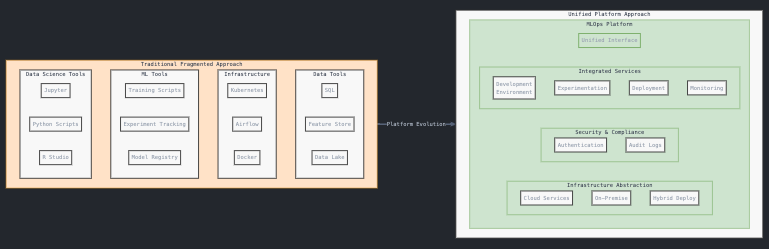

The Challenge of Tool Fragmentation in Enterprise ML

One of the most pressing challenges facing organizations today is the fragmentation of ML tooling. Teams often find themselves working with a variety of disconnected tools:

- Jupyter notebooks for experimentation

- Airflow or Kubeflow for orchestration

- Custom-built feature stores

- Various deployment solutions

This fragmentation creates silos between teams and introduces significant friction in the ML development lifecycle. Data scientists might be proficient in SQL and modeling but struggle with infrastructure management, while engineering teams grapple with maintaining consistency across different environments.

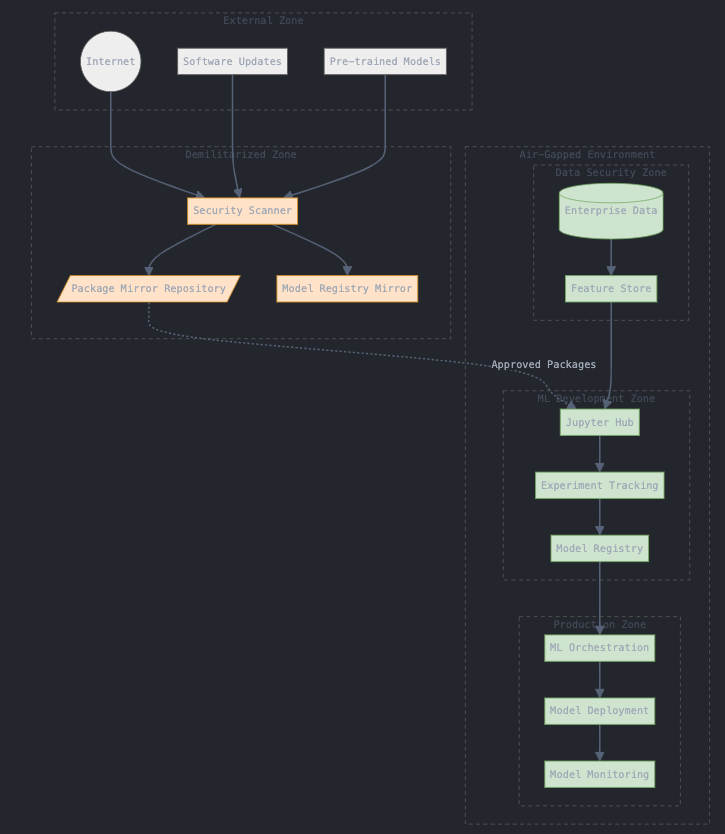

On-Premise Requirements in Emerging Markets

Unlike in Western markets, where cloud adoption is the norm, many organizations in emerging markets face strict requirements for on-premise deployments. These requirements bring their own challenges:

- Need for air-gapped environments

- Data sovereignty requirements

- Limited access to cloud-native services

- Complex compliance requirements

These constraints make it crucial to design MLOps solutions that can function effectively in isolated environments while still maintaining modern development practices.
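One practical consequence of an air-gapped requirement is that every service a pipeline talks to has to live inside the isolated network. As a minimal sketch, assuming a hypothetical service map and a hypothetical allowlist of internal hostname suffixes (neither comes from a specific product), a pre-deployment check could flag any endpoint that points outside:

```python
from __future__ import annotations

from urllib.parse import urlparse

# Hypothetical allowlist: hostname suffixes treated as internal to the isolated network.
INTERNAL_SUFFIXES = (".internal", ".local")


def find_external_endpoints(
    endpoints: dict[str, str],
    internal_suffixes: tuple[str, ...] = INTERNAL_SUFFIXES,
) -> list[str]:
    """Return the names of endpoints whose host is not on the internal allowlist."""
    external = []
    for name, url in endpoints.items():
        host = urlparse(url).hostname or ""
        is_internal = host == "localhost" or host.endswith(internal_suffixes)
        if not is_internal:
            external.append(name)
    return sorted(external)


# Hypothetical service map for an on-premise deployment.
config = {
    "artifact_store": "http://minio.ml.internal:9000",
    "model_registry": "http://registry.ml.internal:5000",
    "package_index": "https://pypi.org/simple",  # would fail in an air-gapped network
}

offenders = find_external_endpoints(config)
```

Here `offenders` contains only `"package_index"`, which suggests mirroring the package index internally before deploying. A real check would also need to cover dependencies that are resolved at runtime rather than declared in configuration.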

Bridging the Skills Gap

A recurring theme in MLOps adoption is the skills gap between data science and engineering teams. Organizations often have:

- Data scientists who excel at experimentation but struggle with production systems

- Engineers who understand infrastructure but aren't familiar with ML workflows

- Teams working in isolation, leading to friction in the development process

The key to addressing this gap lies in implementing tools and practices that abstract away complexity while maintaining flexibility and control.

The Path Forward: Platform-Based Approaches

To address these challenges, organizations are increasingly looking toward platform-based approaches that can:

- Unify disparate tools under a single interface

- Support both on-premise and hybrid deployments

- Abstract away infrastructure complexity

- Maintain security and compliance requirements

- Enable code reuse across different environments
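To make "abstracting away infrastructure" and "code reuse across environments" concrete, the sketch below shows one possible shape for such a layer. All names here (`ArtifactStore`, `LocalDiskStore`, `InMemoryStore`, `publish_model`) are hypothetical and chosen for illustration; they are not taken from any particular platform. The idea is that pipeline code depends only on a small interface, and each environment supplies its own backend:

```python
import tempfile
from pathlib import Path
from typing import Dict, Protocol


class ArtifactStore(Protocol):
    """Hypothetical interface that pipeline code depends on."""

    def save(self, name: str, data: bytes) -> None: ...

    def load(self, name: str) -> bytes: ...


class LocalDiskStore:
    """On-premise backend: keeps artifacts in a directory on local or network disk."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def save(self, name: str, data: bytes) -> None:
        (self.root / name).write_bytes(data)

    def load(self, name: str) -> bytes:
        return (self.root / name).read_bytes()


class InMemoryStore:
    """Stand-in for a second backend, such as an object-store client in a hybrid setup."""

    def __init__(self) -> None:
        self._blobs: Dict[str, bytes] = {}

    def save(self, name: str, data: bytes) -> None:
        self._blobs[name] = data

    def load(self, name: str) -> bytes:
        return self._blobs[name]


def publish_model(store: ArtifactStore, version: str, weights: bytes) -> str:
    """Pipeline step that does not know where artifacts physically live."""
    name = f"model-{version}.bin"
    store.save(name, weights)
    return name


# The same pipeline step runs unchanged against either backend.
with tempfile.TemporaryDirectory() as tmp:
    on_prem = LocalDiskStore(Path(tmp) / "artifacts")
    saved_name = publish_model(on_prem, "v1", b"weights")
    round_trip = on_prem.load(saved_name)
```

Because `publish_model` only sees the interface, moving from an air-gapped cluster to a hybrid deployment would mean swapping the backend object rather than rewriting the pipeline, which is the kind of gradual adoption a platform-based approach aims for.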

Conclusion: Building for Scale and Flexibility

As organizations continue to mature in their ML operations, the focus should be on building systems that can scale while maintaining flexibility. The key is finding solutions that:

- Support both air-gapped and connected environments

- Enable gradual adoption without forcing complete infrastructure overhauls

- Provide abstraction layers that simplify operations without sacrificing control

- Allow teams to maintain their preferred tools while improving collaboration

MLOps in emerging markets will likely settle on a hybrid approach, in which organizations maintain strict security requirements while still benefiting from modern ML development practices.

Whether you’re just starting your MLOps journey or looking to scale existing operations, the key is to focus on solutions that can adapt to your specific environmental constraints while enabling your teams to work effectively together.