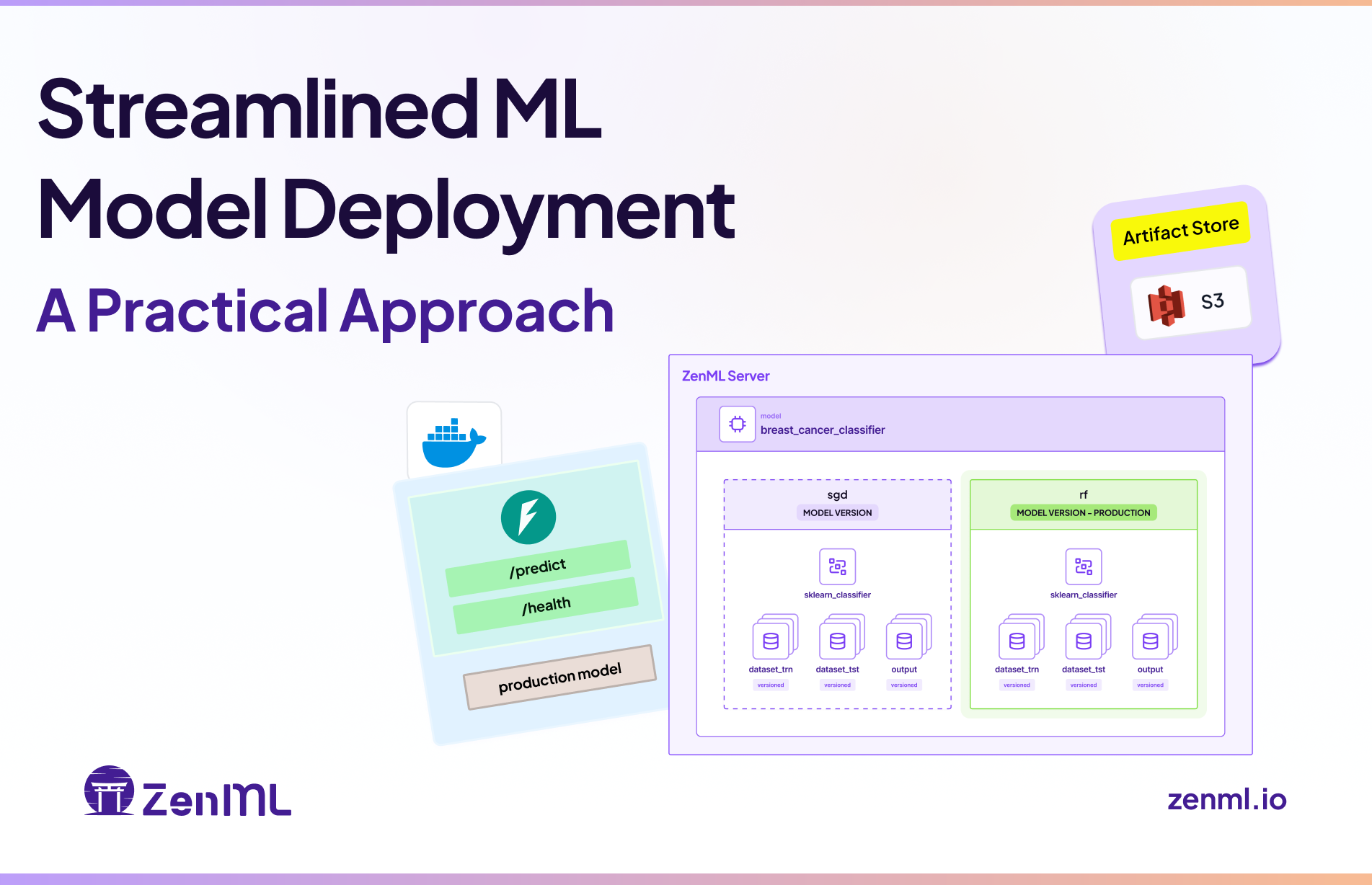

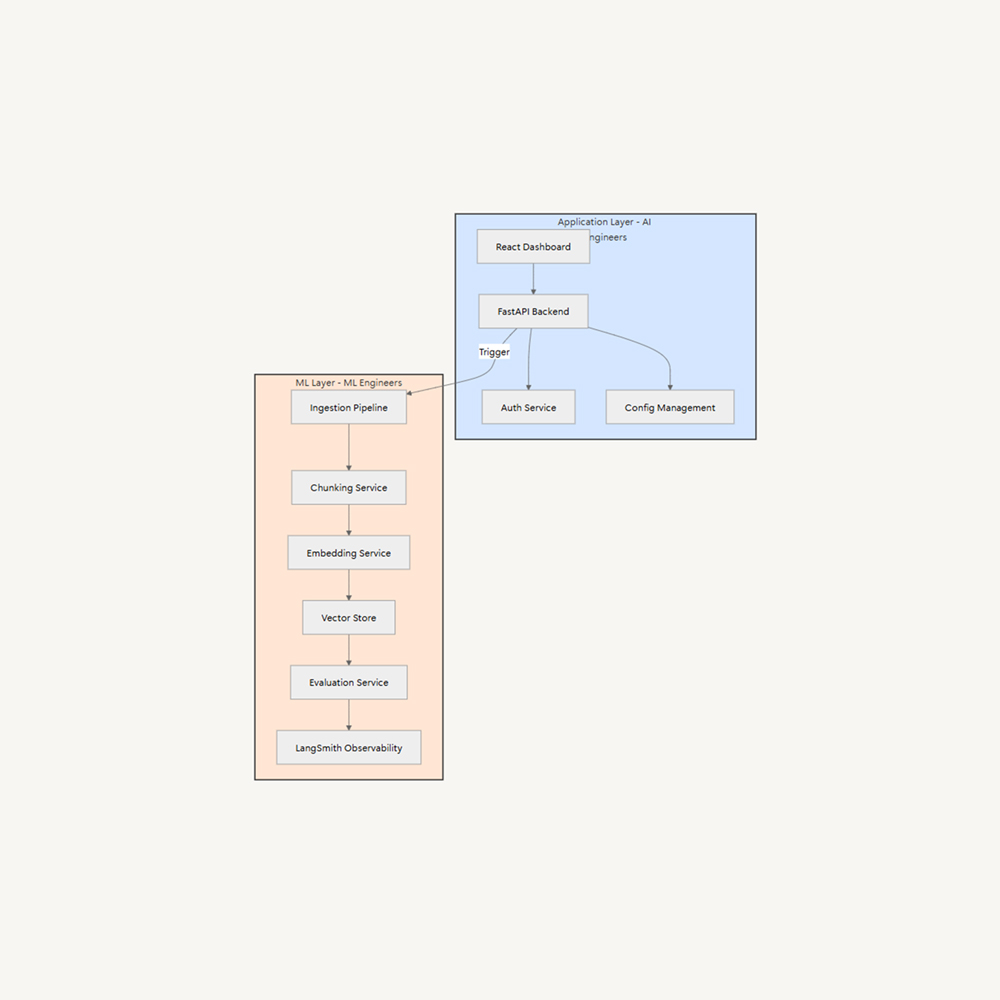

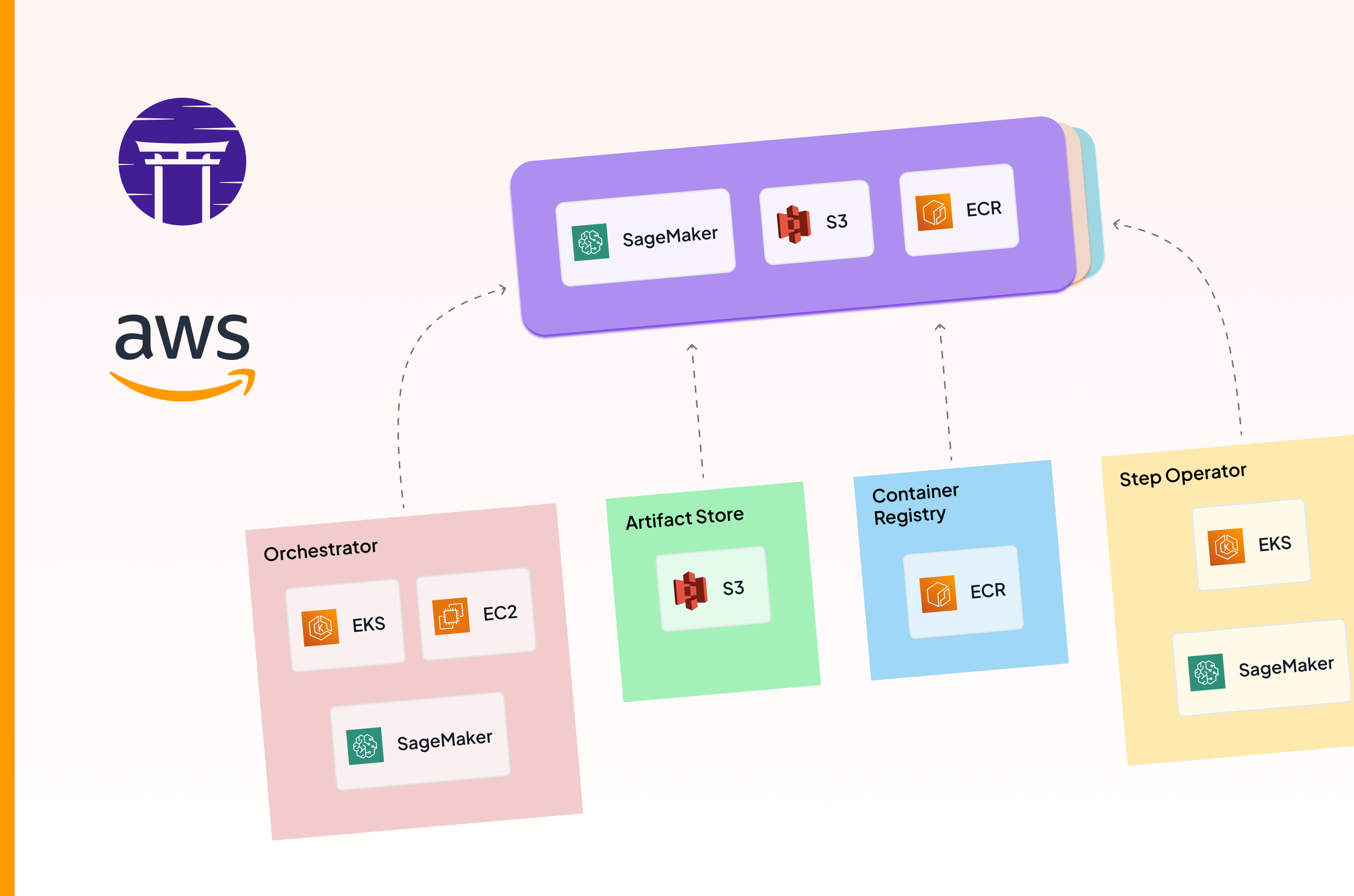

Machine Learning Operations (MLOps) remains crucial even with the rise of Large Language Models (LLMs). Implementing MLOps on AWS with services like SageMaker, ECR, S3, EC2, and EKS can enhance productivity and streamline workflows. ZenML, an open-source MLOps framework, simplifies the integration and management of these services and makes it easier to switch between AWS components. An MLOps pipeline is built from several kinds of components: Orchestrators, Artifact Stores, Container Registries, Model Deployers, and Step Operators. AWS offers a suite of managed services, such as ECR, S3, and EC2, but combining them into a cohesive MLOps workflow requires careful planning and configuration.
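To make the component list concrete, here is a minimal sketch in plain Python of how each pipeline role might be paired with an AWS service. It does not use ZenML's API. The `StackComponent` class, the `missing_roles` helper, and the specific role-to-service pairings (for example, EKS as the model deployer) are assumptions chosen for illustration, not a prescribed setup.

```python
from dataclasses import dataclass

# The five component roles named in the paragraph above.
REQUIRED_ROLES = {
    "orchestrator",
    "artifact_store",
    "container_registry",
    "model_deployer",
    "step_operator",
}


@dataclass(frozen=True)
class StackComponent:
    """Hypothetical pairing of a pipeline role with an AWS service."""
    role: str
    aws_service: str


# One possible assignment; these pairings are illustrative assumptions.
EXAMPLE_STACK = [
    StackComponent("orchestrator", "SageMaker"),
    StackComponent("artifact_store", "S3"),
    StackComponent("container_registry", "ECR"),
    StackComponent("model_deployer", "EKS"),
    StackComponent("step_operator", "SageMaker"),
]


def missing_roles(stack):
    """Return the required roles that no component in the stack covers."""
    return REQUIRED_ROLES - {component.role for component in stack}


if __name__ == "__main__":
    for component in EXAMPLE_STACK:
        print(f"{component.role}: {component.aws_service}")
    print("missing roles:", sorted(missing_roles(EXAMPLE_STACK)))
```

A check like `missing_roles` reflects the planning concern above: each role needs a configured service before the workflow hangs together.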

![Why ML in production is (still) broken - [#MLOps2020]](https://assets.zenml.io/webflow/64a817a2e7e2208272d1ce30/79950cfa/65316d2bc051413294285f1e_mlopsworldthumbnail.png)