We’re excited to announce a new release of ZenML, 0.64.0, bringing significant improvements to streamline your MLOps workflows and expand cloud integration capabilities. Let’s dive into the key features of this update:

1. Notebook Integration for Remote Pipelines

ZenML now supports running steps defined in notebook cells with remote orchestrators and step operators. This feature bridges the gap between experimentation and production, allowing data scientists to seamlessly transition their work from local notebooks to remote environments. Whether you’re prototyping or deploying, ZenML adapts to your workflow.

2. Optimized Docker Builds with Code Uploads

We’ve introduced an option to upload your code directly to the artifact store, which lets ZenML reuse existing Docker builds instead of rebuilding the image for every code change. This feature is enabled by default and can significantly accelerate your development cycles, especially when working with remote stacks.

Key benefits include:

- Faster iteration and development

- No need to register a code repository for build reuse

- Builds only occur when requirements or DockerSettings change
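To make the last point concrete, here is a minimal conceptual sketch of build reuse. It is not ZenML's actual implementation: `build_fingerprint` and `needs_rebuild` are hypothetical helpers, and the requirement names and `parent_image` value are made up for illustration. The idea is that only requirements and Docker-related settings feed the fingerprint, so editing step code leaves it unchanged and an existing image can be reused.

```python
import hashlib
import json


def build_fingerprint(requirements, docker_settings):
    """Hash only the inputs that should trigger a new image build.

    Hypothetical helper for illustration; source code is deliberately
    excluded because it is uploaded separately instead of baked in.
    """
    payload = json.dumps(
        {
            "requirements": sorted(requirements),
            "docker_settings": docker_settings,
        },
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def needs_rebuild(previous_fingerprint, requirements, docker_settings):
    """Return True when no earlier build matches the current inputs."""
    return previous_fingerprint != build_fingerprint(requirements, docker_settings)


settings = {"parent_image": "python:3.11"}

# First build: remember the fingerprint of the image that was produced.
first = build_fingerprint(["scikit-learn", "pandas"], settings)

# Code-only change: same requirements and settings, so the build is reused.
code_only_change = needs_rebuild(first, ["pandas", "scikit-learn"], settings)

# New requirement: the fingerprint changes, so a rebuild is needed.
new_requirement = needs_rebuild(first, ["pandas", "scikit-learn", "xgboost"], settings)
```

In this sketch, reordering the requirements does not force a rebuild because they are sorted before hashing; that is a design choice of the illustration, not a documented ZenML behavior.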

To disable this feature, set DockerSettings.allow_download_from_artifact_store=False for steps or pipelines. For more details on which files are built into the image, check our updated documentation.

3. AzureML Orchestrator Support

Expanding our cloud platform support, ZenML now integrates with AzureML as an orchestrator. This addition caters to teams leveraging Microsoft Azure for their machine learning infrastructure. For a comprehensive guide on setting up an Azure stack, refer to our full Azure guide in the documentation.

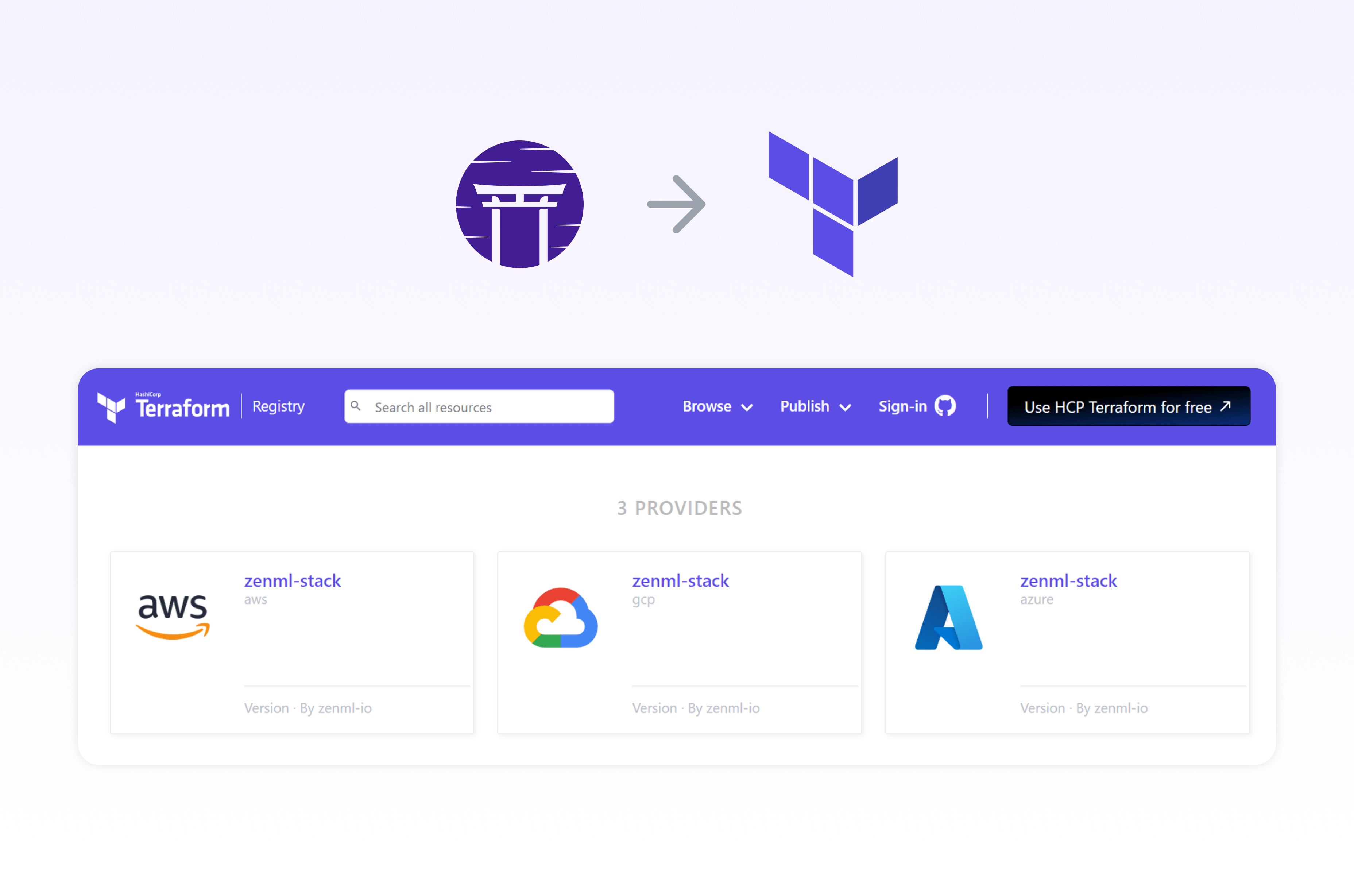

4. Terraform Modules for MLOps Stacks

We’re thrilled to announce the release of new Terraform modules on the HashiCorp Terraform Registry. These modules enable the provisioning of complete MLOps stacks across major cloud providers, offering:

- Automated infrastructure setup for ZenML stack deployment

- Streamlined registration of configurations to the ZenML server

For an in-depth look at how these Terraform modules can revolutionize your MLOps infrastructure, check out our recent blog post: “Infrastructure as Code (IaC) for MLOps with Terraform & ZenML”.

Conclusion

This release represents a significant step forward in our mission to simplify and enhance MLOps workflows. By introducing notebook integration for remote pipelines, optimizing Docker builds, expanding cloud support, and providing Terraform modules, we’re empowering data science teams to work more efficiently and effectively.

We encourage you to update to the latest version of ZenML and explore these new features. As always, we welcome your feedback and look forward to seeing how these improvements accelerate your ML projects.

Happy coding!