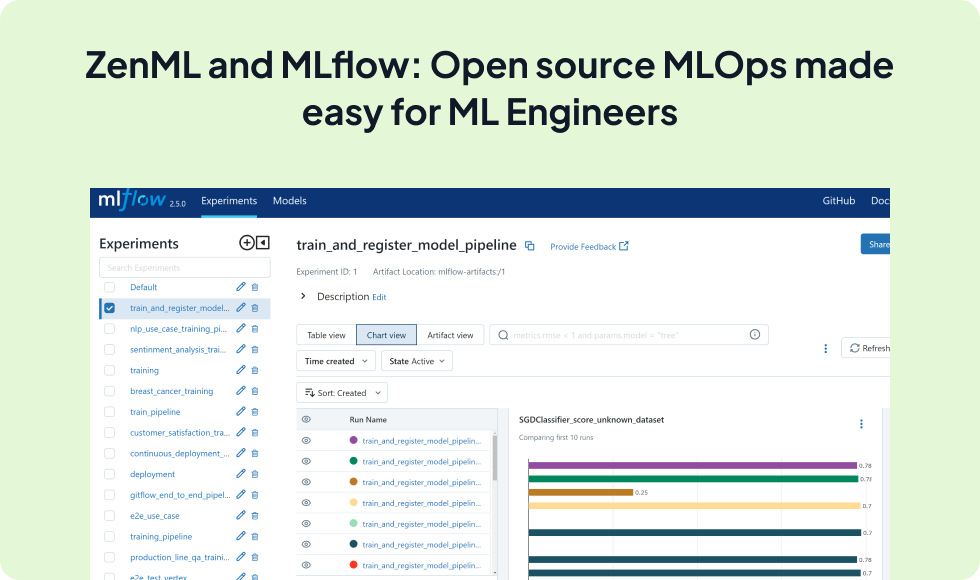

Integrate the power of MLflow's experiment tracking capabilities directly into your ZenML pipelines. Effortlessly log and visualize models, parameters, metrics, and artifacts produced by your pipeline steps, enhancing reproducibility and collaboration across your ML workflows.

```python
import mlflow
from sklearn.base import BaseEstimator
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from zenml import pipeline, step


# "mlflow_tracker" must match the name of an MLflow experiment tracker
# registered in the active ZenML stack.
@step(experiment_tracker="mlflow_tracker")
def train_model() -> BaseEstimator:
    # Let MLflow automatically log scikit-learn parameters, metrics, and the model.
    mlflow.autolog()

    iris = load_iris()
    X_train, X_test, y_train, y_test = train_test_split(
        iris.data, iris.target, test_size=0.2, random_state=42
    )

    model = RandomForestClassifier()
    model.fit(X_train, y_train)
    y_pred = model.predict(X_test)

    # Explicit logging calls work alongside autologging.
    mlflow.log_param("n_estimators", model.n_estimators)
    # The score is computed on the held-out split, so it is logged as test accuracy.
    mlflow.log_metric("test_accuracy", accuracy_score(y_test, y_pred))
    return model


@pipeline(enable_cache=False)
def training_pipeline():
    train_model()


training_pipeline()
```