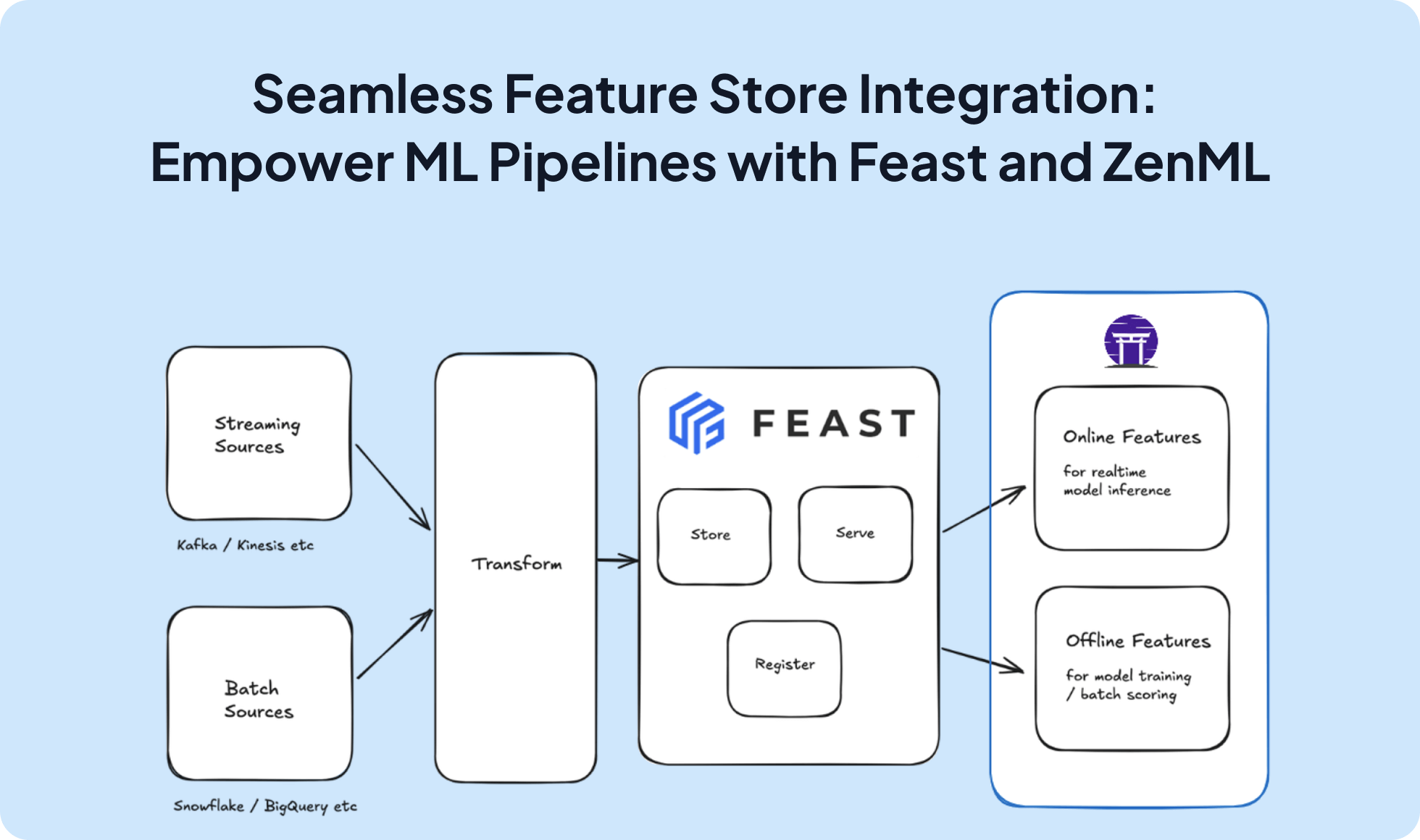

Integrate Feast, an open-source feature store, with ZenML to manage and serve features for both model training and inference directly from your pipelines.

```python
# First register the feature store and a stack
# zenml feature-store register feast_store --flavor=feast --feast_repo="<PATH/TO/FEAST/REPO>"
# zenml stack register ... -f feast_store

import pandas as pd  # required for pd.DataFrame below
from zenml import step, pipeline
from zenml.client import Client


@step
def get_historical_features(
    entity_dict, features, full_feature_names=False
):
    # Look up the Feast feature store registered in the active stack
    feature_store = Client().active_stack.feature_store
    entity_df = pd.DataFrame.from_dict(entity_dict)
    return feature_store.get_historical_features(
        entity_df=entity_df,
        features=features,
        full_feature_names=full_feature_names,
    )


@pipeline
def my_pipeline():
    my_features = get_historical_features(
        entity_dict={"driver_id": [1001, 1002, 1003]},
        features=["driver_hourly_stats:conv_rate", "driver_hourly_stats:acc_rate"]
    )
    # use features in downstream steps


# also use the CLI for Feast metadata etc
# zenml feature-store feature get-entities
# zenml feature-store feature get-data-sources
# ...
```
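
The entity dictionary in the example above carries only `driver_id`. Feast's historical (point-in-time) lookups generally also expect an `event_timestamp` column in the entity DataFrame, so a real pipeline would likely need one. The sketch below is one possible way to build such a dictionary with plain, JSON-friendly values; `build_entity_dict` is a hypothetical helper, not part of ZenML or Feast, and the timestamp is an arbitrary illustrative value.

```python
from datetime import datetime, timezone


def build_entity_dict(driver_ids, event_time):
    """Hypothetical helper: pair each driver ID with one event timestamp.

    Timestamps are stored as ISO 8601 strings so the dict stays a plain,
    serializable value that can be passed as a step parameter.
    """
    ids = list(driver_ids)
    return {
        "driver_id": ids,
        "event_timestamp": [event_time.isoformat()] * len(ids),
    }


entity_dict = build_entity_dict(
    [1001, 1002, 1003],
    datetime(2021, 4, 12, 10, 59, 42, tzinfo=timezone.utc),  # illustrative value
)

# Inside the step, the strings would need to be parsed back into datetimes
# before building the entity DataFrame (assumption: done by the step itself).
parsed = [datetime.fromisoformat(ts) for ts in entity_dict["event_timestamp"]]
```

Keeping the timestamps as strings at the pipeline boundary and parsing them inside the step is a design choice made here for serializability; passing `datetime` objects directly may also work depending on how the step's inputs are materialized.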
