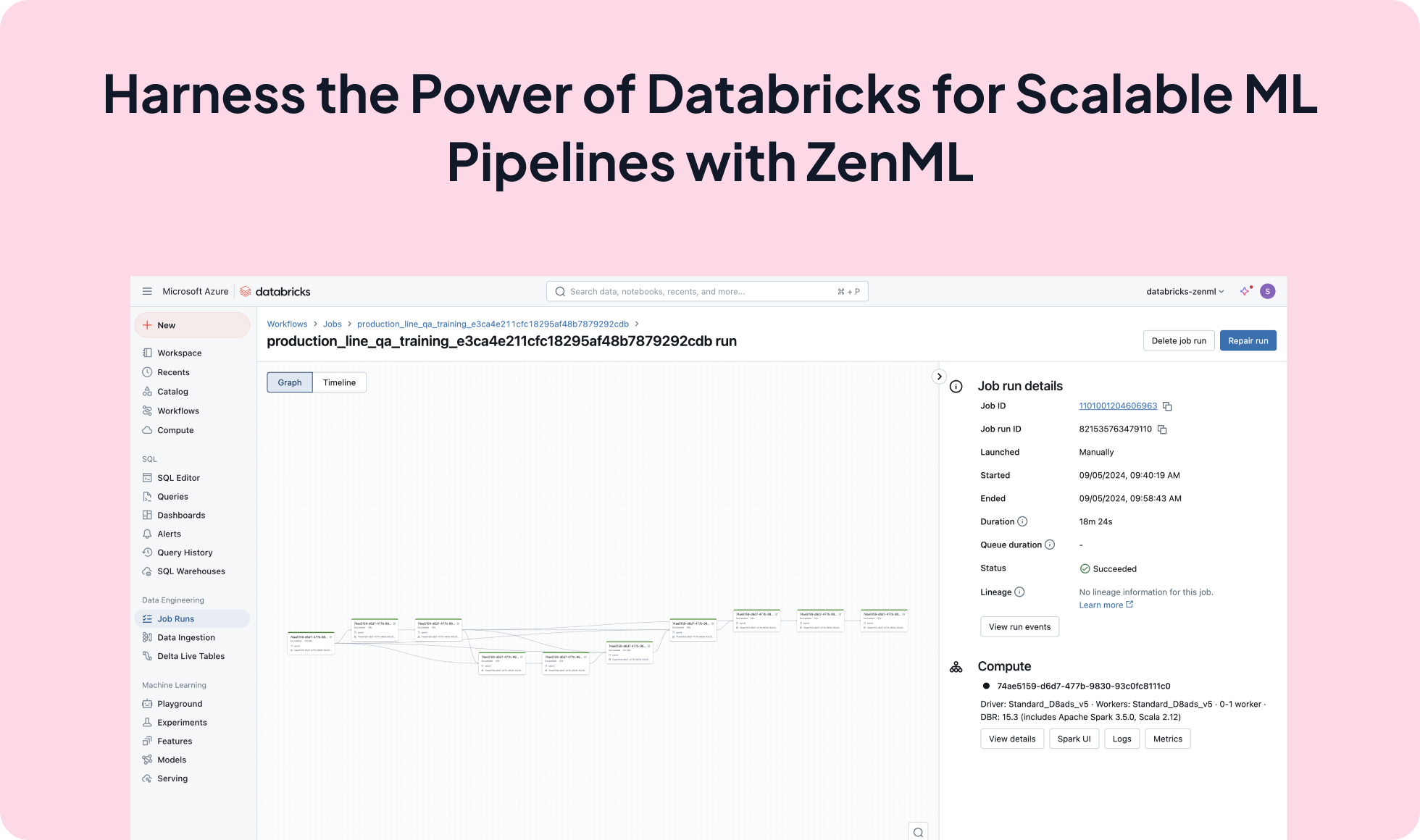

Seamlessly integrate ZenML with Databricks to leverage its distributed computing capabilities for efficient and scalable machine learning workflows. This integration enables data scientists and engineers to run their ZenML pipelines on Databricks, taking advantage of its optimized environment for big data processing and ML workloads.

```python
from zenml import pipeline
from zenml.integrations.databricks.flavors.databricks_orchestrator_flavor import DatabricksOrchestratorSettings

# POLICY_ID and the four steps used below are assumed to be defined elsewhere.
databricks_settings = DatabricksOrchestratorSettings(
    spark_version="15.3.x-scala2.12",
    num_workers="3",
    node_type_id="Standard_D4s_v5",
    policy_id=POLICY_ID,
    autoscale=(2, 3),
)

# Attach the Databricks cluster settings to the pipeline.
@pipeline(
    settings={
        "orchestrator.databricks": databricks_settings,
    }
)
def my_pipeline():
    load_data()
    preprocess_data()
    train_model()
    evaluate_model()

# Calling the pipeline runs it on the active stack.
my_pipeline()
```

Expand your ML pipelines with more than 50 ZenML Integrations