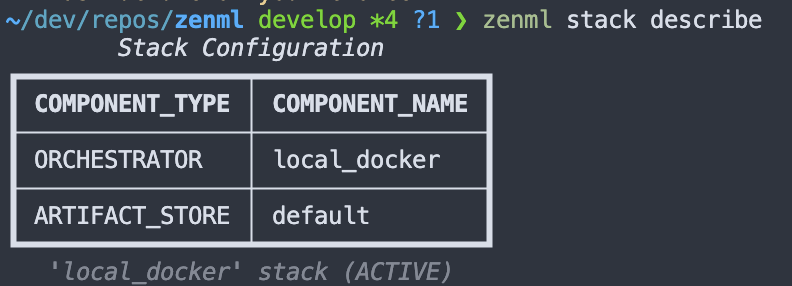

Integrate ZenML with Docker to execute your ML pipelines in isolated environments locally. This integration simplifies debugging and ensures consistent execution across different systems.

```python
from zenml import step, pipeline
from zenml.orchestrators.local_docker.local_docker_orchestrator import (
    LocalDockerOrchestratorSettings,
)


@step
def preprocess_data() -> list:
    # Preprocessing logic here; the empty list is a placeholder output
    return []


@step
def train_model(data: list) -> None:
    # Model training logic here, consuming the preprocessed data
    pass


# Extra arguments forwarded to the Docker container run
settings = {
    "orchestrator.local_docker": LocalDockerOrchestratorSettings(
        run_args={"cpu_count": 2}
    )
}


@pipeline(settings=settings)
def ml_pipeline():
    data = preprocess_data()
    train_model(data)


if __name__ == "__main__":
    ml_pipeline()
```

Expand your ML pipelines with more than 50 ZenML Integrations