| Workflow Orchestration |

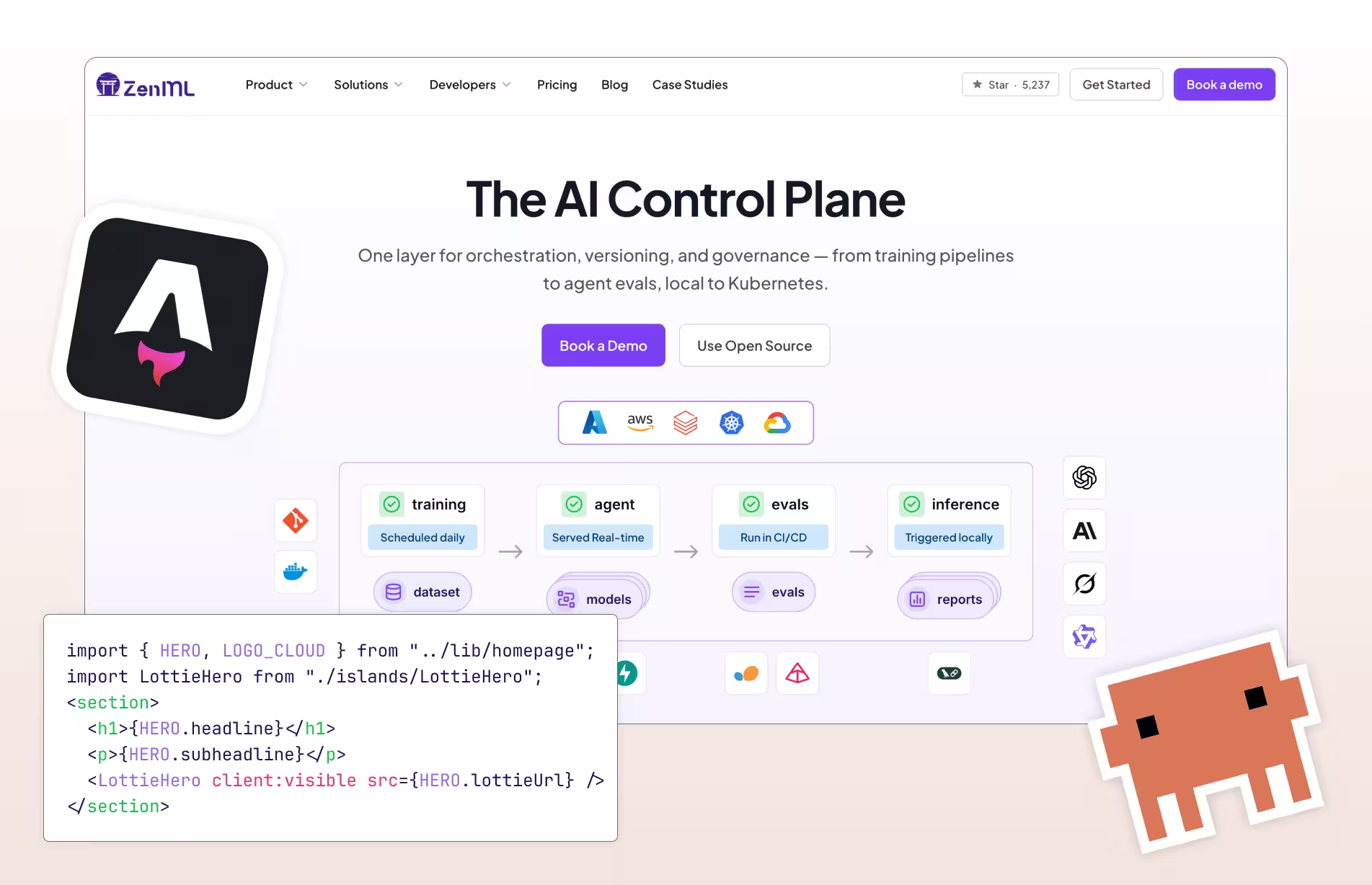

Purpose-built ML pipeline orchestration with pluggable backends — Airflow, Kubeflow, Kubernetes, and more

|

First-class DAG pipelines built from versioned components with UI, CLI, and SDK authoring plus built-in scheduling within Azure

|

| Integration Flexibility |

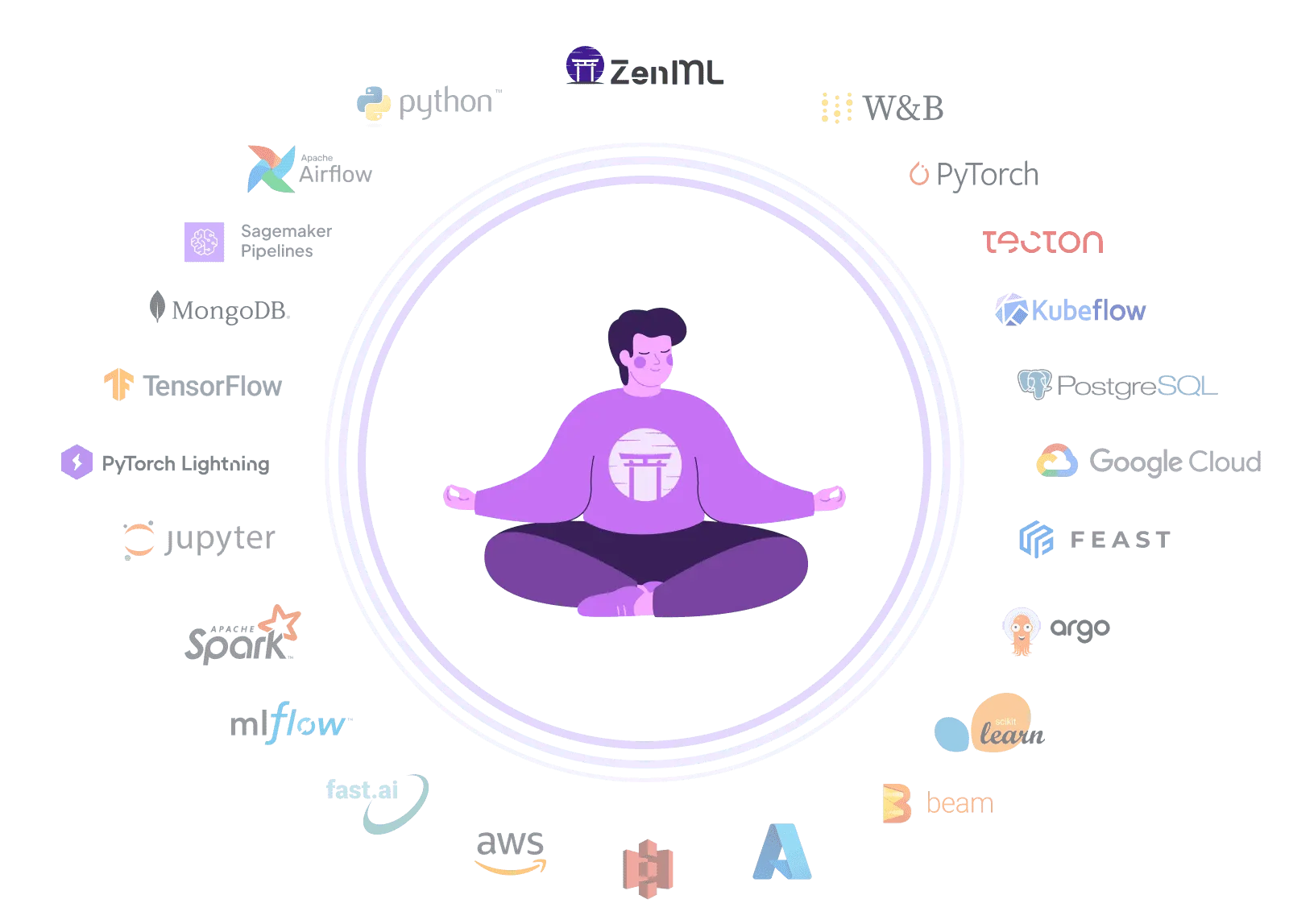

Composable stack with 50+ MLOps integrations: swap orchestrators, trackers, and deployers without changing pipeline code

|

Integrates well with Azure-native services and supports MLflow, but is not built as a neutral integration hub for non-Azure tools

|

| Vendor Lock-In |

Open-source Python pipelines run anywhere — switch clouds, orchestrators, or tools without rewriting code

|

Fundamentally an Azure service: Arc-enabled Kubernetes extends compute reach, but governance and asset control remain tied to Azure workspaces

|

| Setup Complexity |

Run `pip install zenml` and start building pipelines in minutes with no infrastructure to provision, then scale when ready

|

Initial setup requires workspace provisioning, IAM/RBAC, networking, and dependent Azure services like Storage, ACR, and Key Vault

|

| Learning Curve |

Python-native API with decorators — familiar to any ML engineer or data scientist who writes Python

|

A broad concept set (assets, resources, components, jobs, endpoints, registries) and fragmentation between the v1 and v2 CLI/SDK generations slow time-to-productivity

|

| Scalability |

Delegates compute to scalable backends (Kubernetes, Spark, cloud ML services), so pipelines scale horizontally with the underlying infrastructure

|

Enterprise-scale managed training and inference on Azure compute, plus hybrid Kubernetes compute targets for large regulated deployments

|

| Cost Model |

Open-source core is free — pay only for your own infrastructure, with optional managed cloud for enterprise features

|

No separate platform fee, but total cost combines compute with multiple Azure services (Storage, ACR, Key Vault, monitoring), which makes overall spend hard to predict

|

| Collaboration |

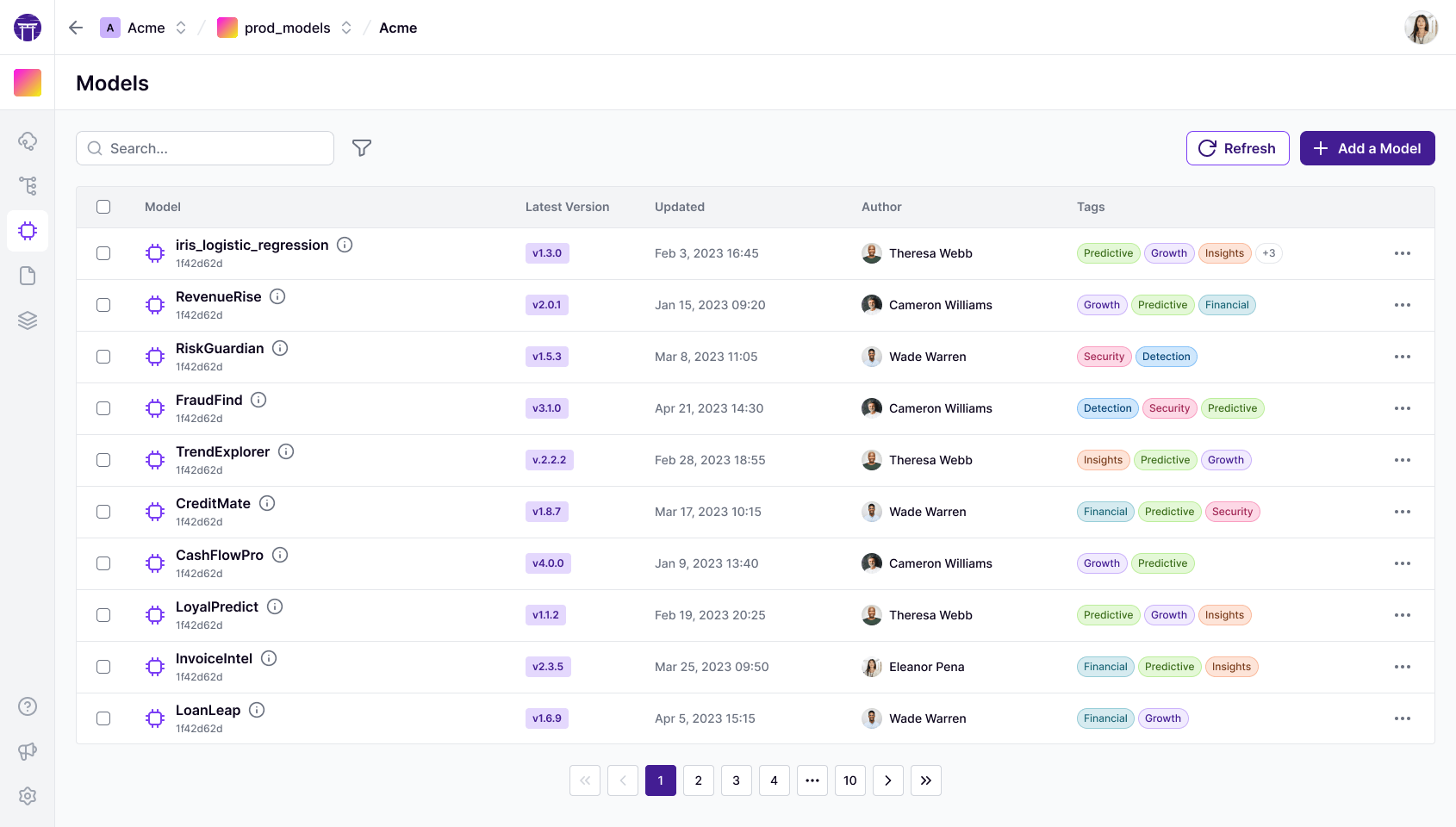

Code-native collaboration through Git, CI/CD, and code review — ZenML Pro adds RBAC, workspaces, and team dashboards

|

Workspaces, registries, and Azure RBAC make collaboration first-class — assets can be centrally managed and replicated across regions

|

| ML Frameworks |

Use any Python ML framework — TensorFlow, PyTorch, scikit-learn, XGBoost, LightGBM — with native materializers and tracking

|

Supports broad ML/DL workflows including no-code AutoML options and arbitrary training routines packaged as components or jobs

|

| Monitoring |

Integrates Evidently, whylogs, and other monitoring tools as stack components for automated drift detection and alerting

|

Deep Azure Monitor integration for endpoint metrics and logs, plus model monitoring with data drift signals and alerting via Event Grid

|

| Governance |

ZenML Pro provides RBAC, SSO, workspaces, and audit trails — self-hosted option keeps all data in your own infrastructure

|

Inherits Azure's enterprise governance model with RBAC, managed identities, registries with fine-grained permissions, and centralized asset management

|

| Experiment Tracking |

Native metadata tracking plus integrations with MLflow, Weights & Biases, Neptune, and Comet for richer experiment comparison

|

MLflow-compatible workspaces with automatic job metadata tracking — Azure ML recommends MLflow for metrics and params logging

|

| Reproducibility |

Automatic artifact versioning, code-to-Git linking, and containerized execution make pipeline runs reproducible

|

Jobs automatically track code, environment, and inputs/outputs — versioned components and registries enable durable asset reuse across workspaces

|

| Auto-Retraining |

Schedule pipelines via any orchestrator or use ZenML Pro event triggers for drift-based automated retraining workflows

|

Supports scheduling pipeline jobs for routine retraining via UI, CLI, and SDK, though v2 schedules do not support event-based triggers

|