| Workflow Orchestration |

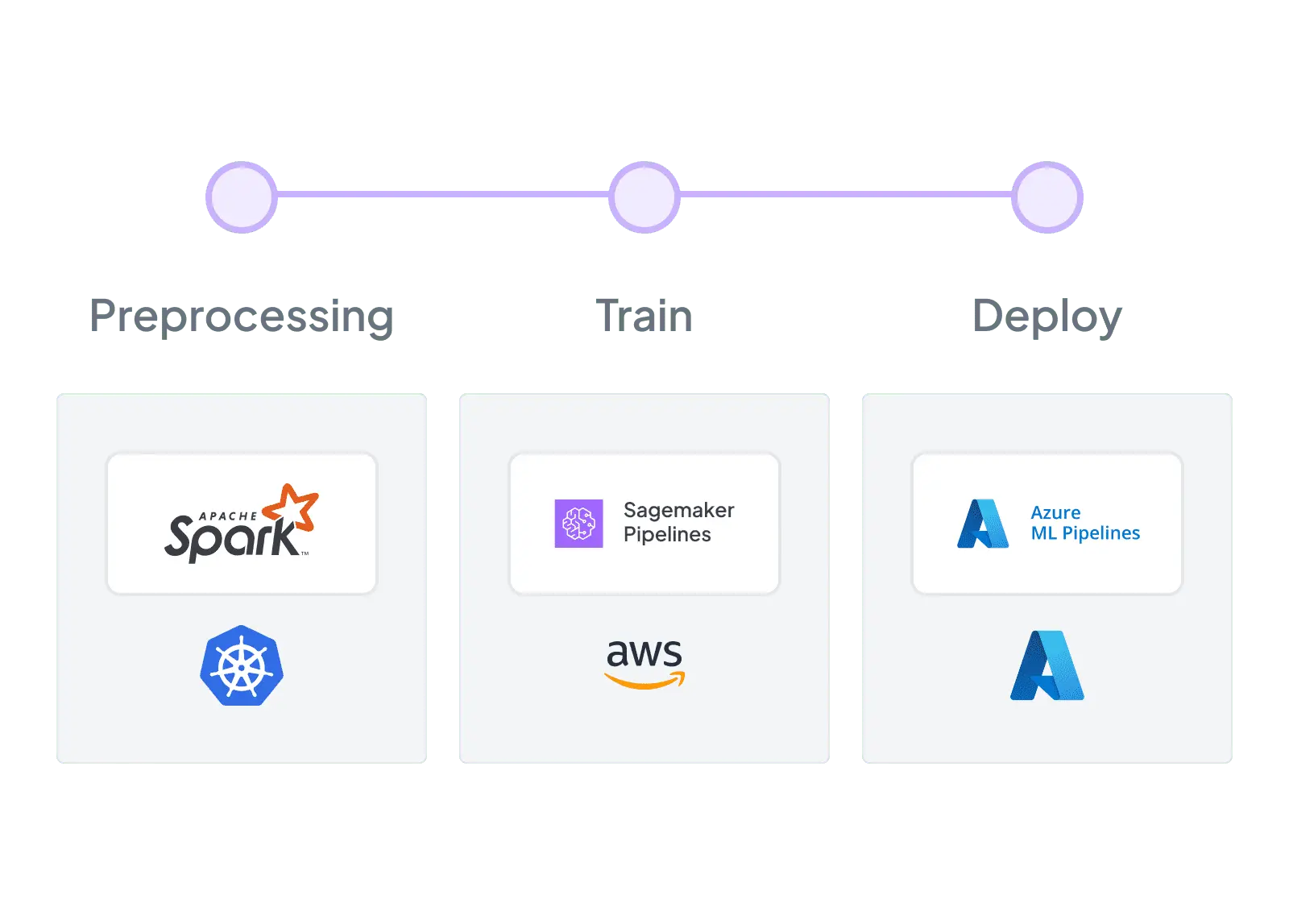

Purpose-built ML pipeline orchestration with pluggable backends — Airflow, Kubeflow, Kubernetes, Vertex AI, and more

|

Vertex AI Pipelines is a managed, production-grade orchestrator for containerized ML workflows on GCP with console visibility and lifecycle tracking

|

| Integration Flexibility |

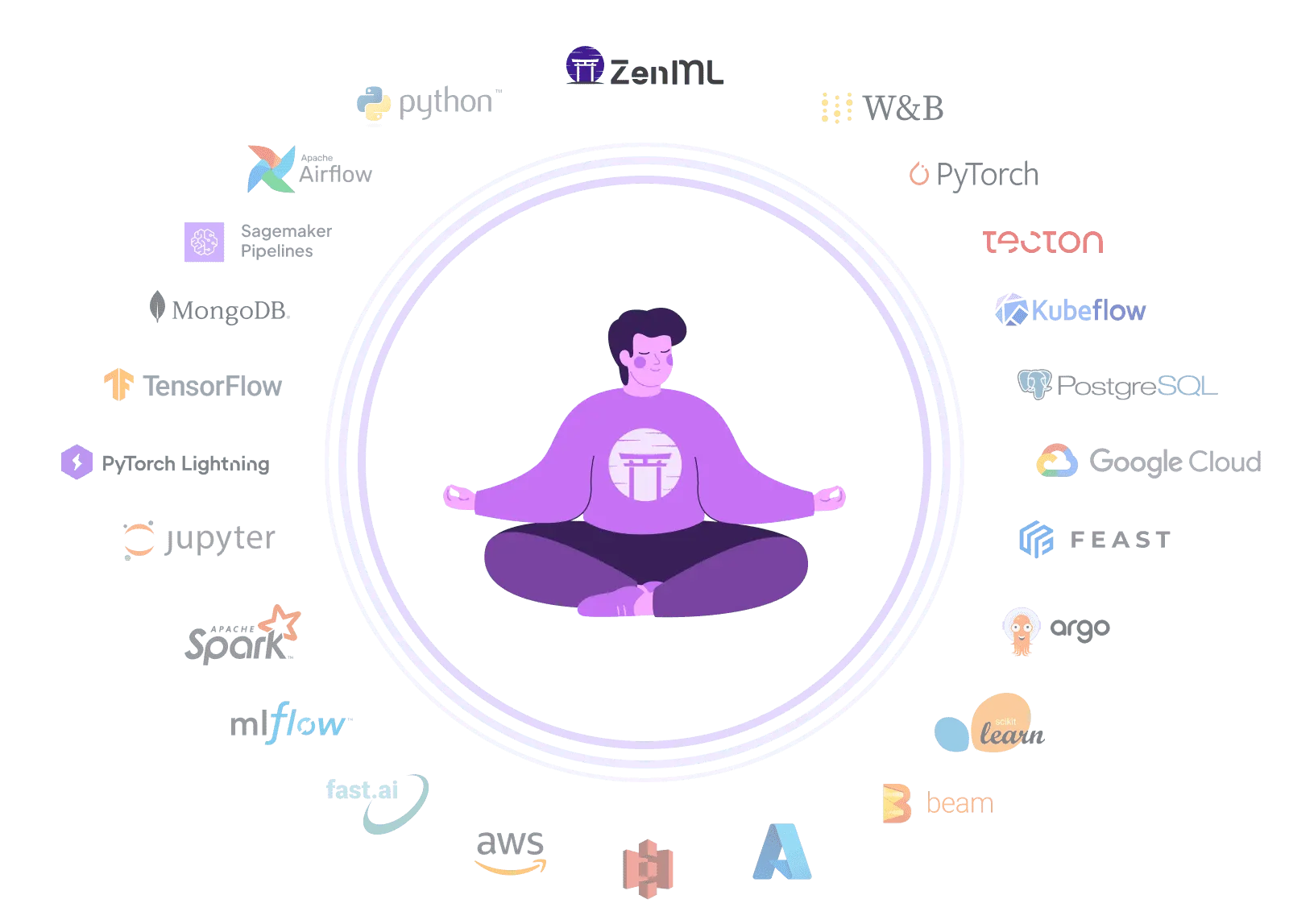

Composable stack with 50+ MLOps integrations — swap orchestrators, trackers, and deployers without code changes

|

Deep integration within GCP via Google Cloud Pipeline Components, but no cloud-agnostic integration model for non-GCP tools

|

| Vendor Lock-In |

Open-source Python pipelines run anywhere — switch clouds, orchestrators, or tools without rewriting code

|

Runs inside a GCP project/region with GCP identity and GCS storage — migration typically means re-platforming the entire pipeline stack

|

| Setup Complexity |

pip install zenml — start building pipelines in minutes with zero infrastructure, scale when ready

|

Managed service eliminates infrastructure setup — configure GCP project, IAM, and storage to get production-grade pipelines running

|

| Learning Curve |

Python-native API with decorators — familiar to any ML engineer or data scientist who writes Python

|

Requires learning KFP component/pipeline DSL, compilation workflows, containerization patterns, and GCP resource concepts

|

| Scalability |

Delegates compute to scalable backends — Kubernetes, Spark, cloud ML services — for unlimited horizontal scaling

|

Enterprise-scale workloads on GCP — orchestrates large training/processing jobs using Google-managed Vertex, BigQuery, and Dataflow services

|

| Cost Model |

Open-source core is free — pay only for your own infrastructure, with optional managed cloud for enterprise features

|

Documented per-run pipeline fee ($0.03/run) plus underlying compute costs — Google provides cost labeling and billing export for transparency

|

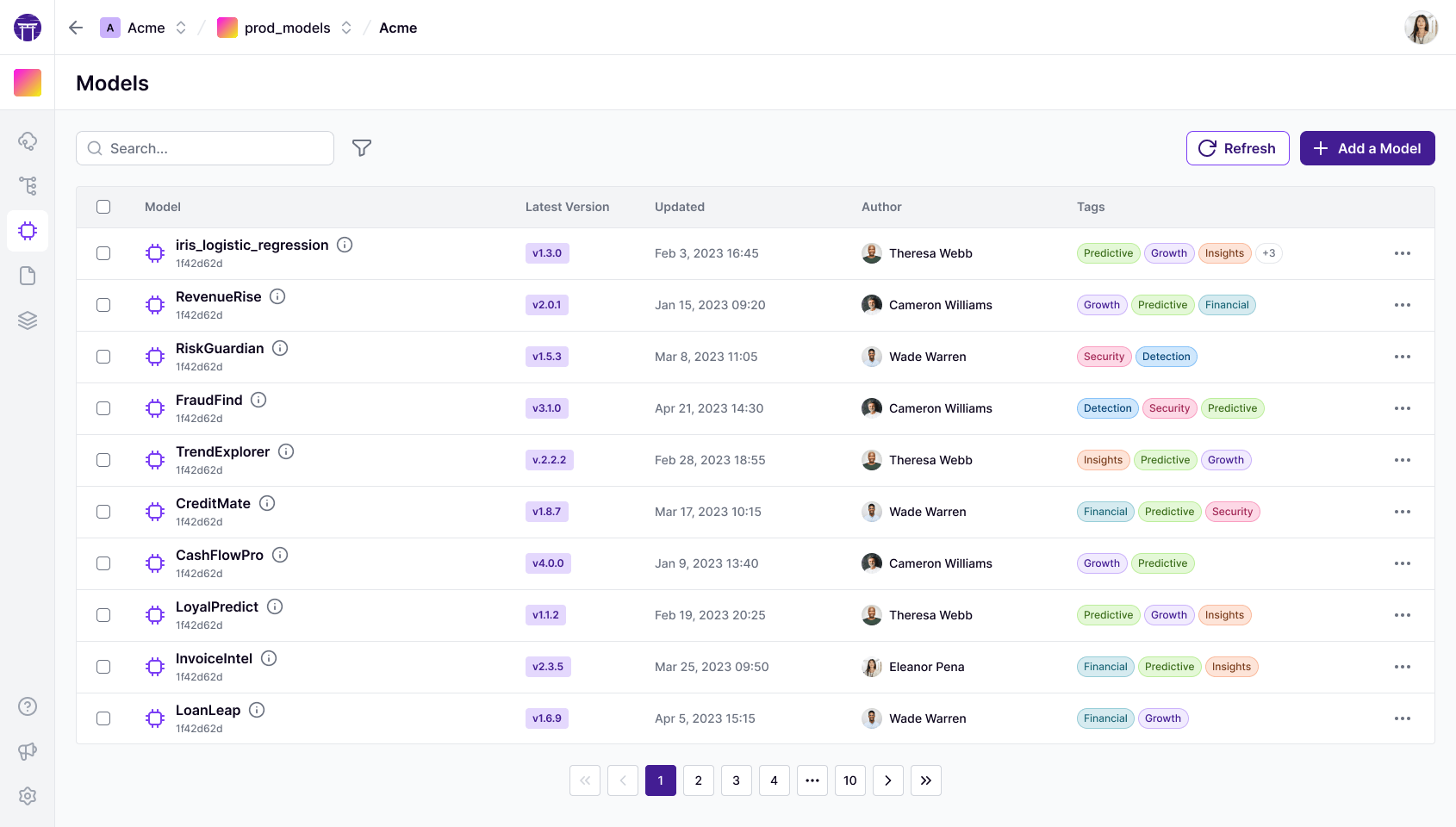

| Collaboration |

Code-native collaboration through Git, CI/CD, and code review — ZenML Pro adds RBAC, workspaces, and team dashboards

|

Collaborative use through shared GCP projects, IAM-based access control, and console-based visibility into runs and metadata

|

| ML Frameworks |

Use any Python ML framework — TensorFlow, PyTorch, scikit-learn, XGBoost, LightGBM — with native materializers and tracking

|

Broad framework support via custom containers and prebuilt container images for common frameworks including PyTorch and TensorFlow

|

| Monitoring |

Integrates Evidently, WhyLogs, and other monitoring tools as stack components for automated drift detection and alerting

|

Vertex AI Model Monitoring provides scheduled monitoring jobs with alerting when model quality metrics cross defined thresholds

|

| Governance |

ZenML Pro provides RBAC, SSO, workspaces, and audit trails — self-hosted option keeps all data in your own infrastructure

|

Enterprise governance via GCP IAM, network controls, billing attribution, and VPC support for pipeline-launched resources

|

| Experiment Tracking |

Native metadata tracking plus seamless integration with MLflow, Weights & Biases, Neptune, and Comet for rich experiment comparison

|

Vertex AI Experiments tracks hyperparameters, environments, and results with SDK and console support built on Vertex ML Metadata

|

| Reproducibility |

Automatic artifact versioning, code-to-Git linking, and containerized execution guarantee reproducible pipeline runs

|

Pipeline templates plus Vertex ML Metadata record artifacts and lineage graphs — strong primitives for reproducing ML workflows on GCP

|

| Auto-Retraining |

Schedule pipelines via any orchestrator or use ZenML Pro event triggers for drift-based automated retraining workflows

|

Vertex AI scheduler API supports one-time or recurring pipeline runs for continuous training patterns within GCP

|