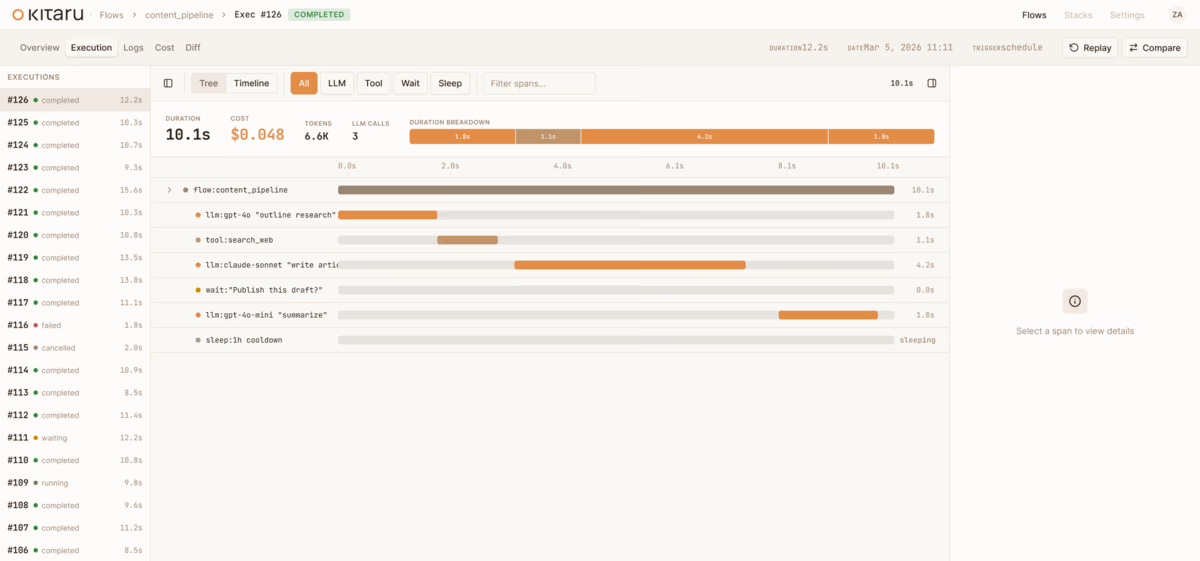

Introducing Kitaru: Open Source Infrastructure For Asynchronous Agents (Built by the ZenML Team)

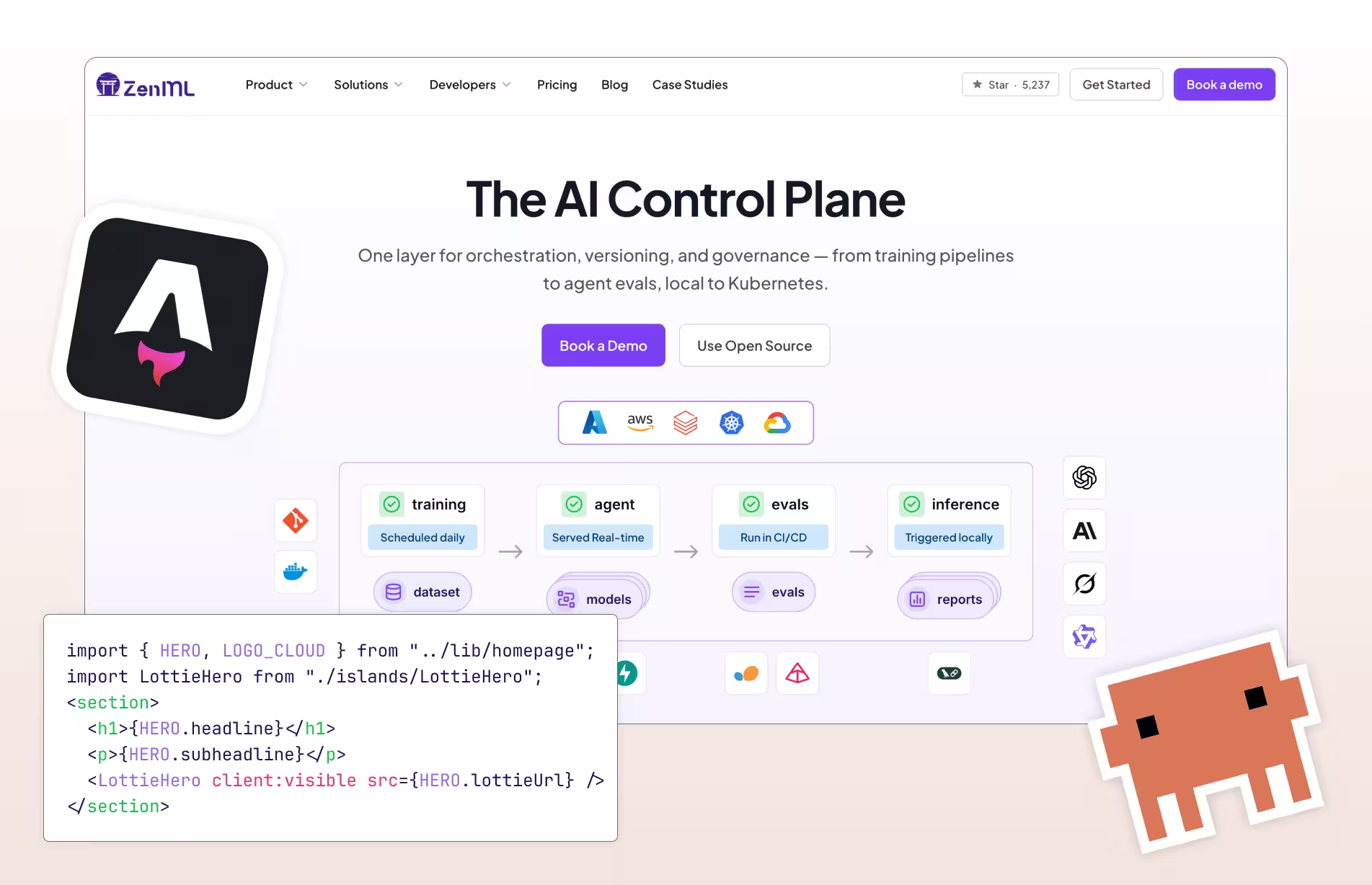

Meet Kitaru: open source durable execution for Python agents, built by the ZenML team. It offers crash recovery, human-in-the-loop support, and replay from any checkpoint.