Sandbox Showdown: E2B vs Daytona (A Guide for Platform Engineers)

In this E2B vs Daytona guide, you will learn how the two compare across sandbox lifecycle management, output handling, pricing, and more.

76 posts in this category

In this article, you will learn about the best E2B alternatives for deploying AI sandboxes. We break down 10 options, covering isolation, execution, pricing, and real-world agent workloads.

In this article, you will learn about the best PromptLayer alternatives to version, test, and monitor prompts in ML workflows.

In this article, we compare n8n and Make and examine whether no-code workflow automations are as efficient as code-based frameworks.

Discover the 11 best LLMOps platforms to build AI agents and workflows.

Analysis of 1,200 production LLM deployments reveals six key patterns separating successful teams from those stuck in demo mode: context engineering over prompt engineering, infrastructure-based guardrails, rigorous evaluation practices, and the recognition that software engineering fundamentals—not frontier models—remain the primary predictor of success.

Explore 419 new real-world LLMOps case studies from the ZenML database, now totaling 1,182 production implementations—from multi-agent systems to RAG.

In this article, you will learn about the best Promptfoo alternatives that help you ship better AI agents.

Discover the 9 best prompt monitoring tools for ML and AI engineering teams.

Discover the 10 best LLM monitoring tools you can use this year.

In this article, you will learn about the best DeepEval alternatives that you can use for LLM evaluation.

In this Langfuse vs Phoenix guide, we conclude which open-source framework fits your LLM stack by comparing features, integration, and pricing.

In this article, you will learn about the best Langfuse alternatives for tracing, evaluation, prompt management, and metrics for LLM apps.

In this article, you will learn about the best LangSmith alternatives you can use for full-stack observability.

In this Langfuse vs LangSmith guide, we conclude which observability platform fits your LLM stack by comparing features, integration, and pricing.

In this Pydantic AI vs CrewAI comparison, we discuss which one is better at building production-grade workflows with generative AI.

In this article, you will learn about the best AutoGPT alternatives to run your AI assistants flawlessly.

In this article, you will learn about the best AutoGen alternatives to build AI agents and applications.

Discover the 9 best LLM orchestration frameworks for agents and RAG.

Discover the 9 best LLM evaluation tools to test your AI models before going live.

In this Langflow vs n8n comparison, we compare both platforms’ features, pricing, and integrations.

Discover the 9 best embedding models for the RAG pipelines you build this year.

Discover the 10 best vector databases for RAG pipelines.

In this Smolagents vs LangGraph comparison, we explain the differences between the two and conclude which one is best for building AI agents.

In this Haystack vs LlamaIndex comparison, we explain the differences between the two and conclude which one is best for building AI agents.

In this Google ADK vs LangGraph comparison, we explain the differences between the two and conclude which one is best for developing and deploying AI agents.

In this Agno vs LangGraph comparison, we explain the differences between the two and conclude which one is best for building multi-agent systems.

In this Pydantic AI vs LangGraph comparison, we explain the differences between the two and conclude which one is best for building AI agents.

In this Vellum AI pricing guide, we discuss the costs, features, and value Vellum AI provides to help you decide if it’s the right investment for your business.

Discover the best LLM observability tools currently on the market to build agentic AI workflows.

In this LlamaIndex vs LangChain comparison, we explain the differences between the two and conclude which one is best for building AI agents.

Discover the top 7 Flowise alternatives, both code and no-code, that you can leverage to build and deploy efficient AI agents.

Discover the top 8 Botpress alternatives, both code and no-code, that you can leverage as a complete AI agent platform.

In this LlamaIndex vs CrewAI comparison, we explain the differences between the two and conclude which one is best for building AI agents.

Discover the top 8 Semantic Kernel alternatives that will help you build efficient AI agents.

In this CrewAI vs n8n comparison, we explain the differences between the two and conclude which one is best for building AI agents.

Discover the top 8 Langflow alternatives you can leverage to build and deploy AI agents.

In this Semantic Kernel vs AutoGen article, we explain the differences between the two frameworks and conclude which one is best suited for building AI agents.

Discover the 7 best Agentic AI frameworks to help you build smarter AI workflows this year.

In this LlamaIndex pricing guide, we discuss the costs, features, and value LlamaIndex provides to help you decide if it’s the right investment for your business.

Compare the best CrewAI alternatives for building production AI workflows, including LangGraph, AutoGen, Google ADK, OpenAI Agents SDK, Pydantic AI, Langflow, Flowise, and LlamaIndex.

Discover the top 8 RAG tools for agentic AI you should try this year.

In this CrewAI vs AutoGen article, we explain the differences between the two and conclude which one is best for building AI agents and applications.

In this CrewAI pricing guide, we discuss the costs, features, and value CrewAI provides to help you decide if it’s the right investment for your business.

In this Agentforce pricing guide, we discuss the costs, features, and value Agentforce provides to help you decide if it’s the right investment for your business.

Compare LangGraph vs n8n for building AI agents in 2025. Updated with LangGraph 1.0 stable release and n8n's new unlimited workflow pricing. Discover which framework fits your production AI stack.

Comprehensive analysis of why simple AI agent prototypes fail in production deployment, revealing the hidden complexities teams face when scaling from demos to enterprise-ready systems.

This Langflow vs LangGraph article explains the key differences between these two agentic AI systems.

Lessons from the Maven Evals course are combined with 50+ real-world case studies from ZenML's LLMOps Database to show how companies like Discord, GitHub, and Coursera implement the Three Gulfs model and Analyze-Measure-Improve lifecycle to transform failing LLM systems into production-ready applications.

In this LangGraph vs AutoGen article, we explain the differences between these platforms and when to use each one for the best results.

In this LlamaIndex vs LangGraph article, we explain the differences between these platforms and when to use each one for optimal results.

The latest 287 curated summaries of LLMOps use cases in industry, from tech to healthcare to finance and more. This blog also highlights some of the trends observed across the case studies.

ZenML's new DXT-packaged MCP server transforms MLOps workflows by enabling natural language conversations with ML pipelines, experiments, and infrastructure, reducing setup time from 15 minutes to 30 seconds and eliminating the need to hunt across multiple dashboards for answers.

Discover the top 7 LlamaIndex alternatives to build AI production agents with ease.

In this LangGraph vs CrewAI article, we explain the differences between the two platforms and show how to use them efficiently inside ZenML.

In this LangGraph pricing guide, we discuss the costs, features, and value LangGraph provides to help you decide if it’s the right investment for your business.

Discover the top 8 LangGraph alternatives for scalable agent orchestration.

In this Metaflow vs MLflow vs ZenML article, we explain the differences between the three platforms and show how to use them in tandem.

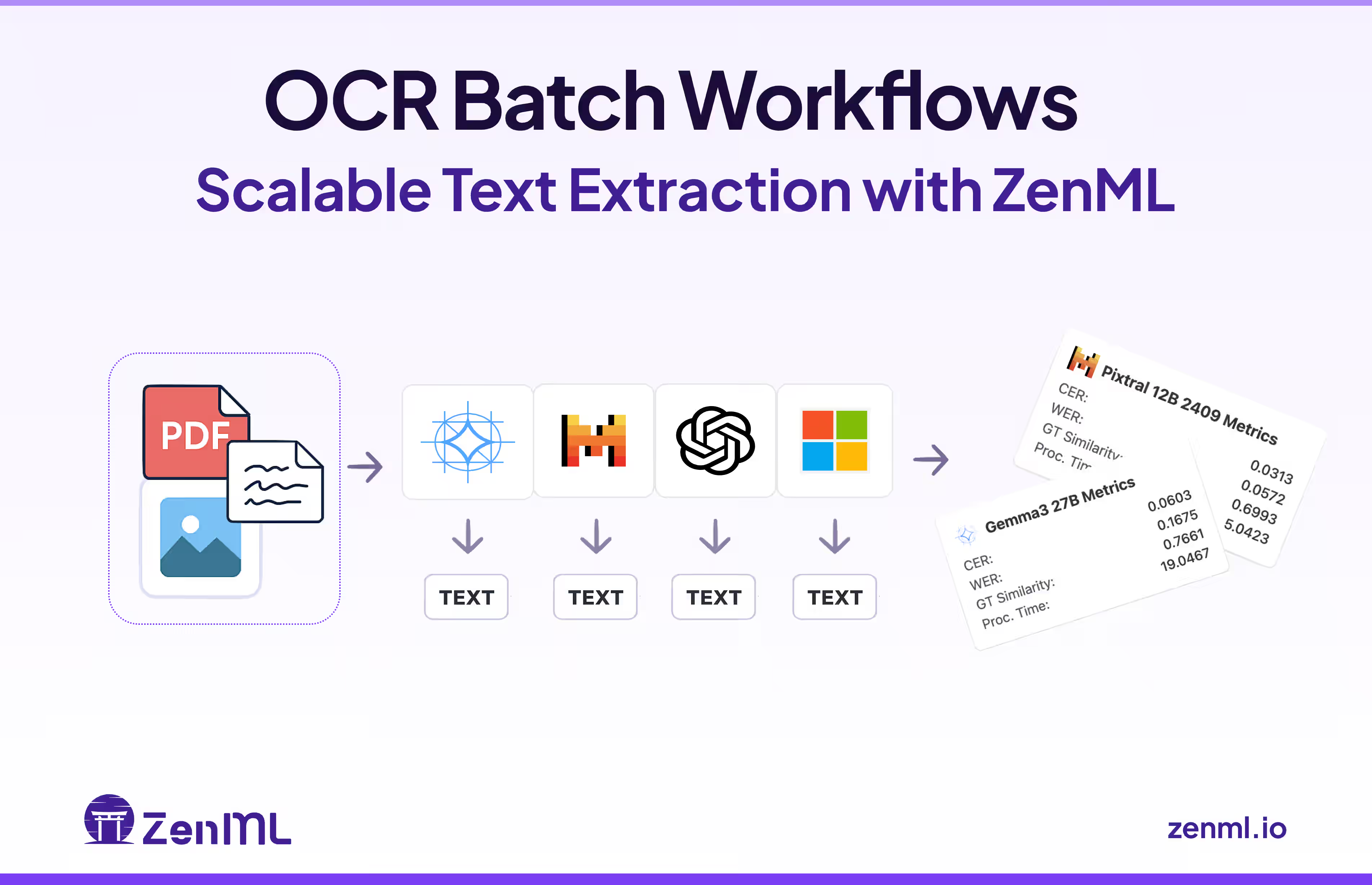

How do you reliably process thousands of diverse documents with GenAI OCR at scale? Explore why robust workflow orchestration is critical for achieving reliability in production. See how ZenML was used to build a scalable, multi-model batch processing system that maintains comprehensive visibility into accuracy metrics. Learn how this approach enables systematic benchmarking to select optimal OCR models for your specific document processing needs.

This piece explores how successful LLMOps implementation depends on human factors, not just technical solutions. It addresses common challenges like misaligned executive expectations, siloed teams, and subject-matter expert resistance that often derail AI initiatives. The piece offers practical strategies for creating effective team structures (hub-and-spoke, horizontal teams, cross-functional squads), improving communication, and integrating domain experts early. With actionable insights from companies like TomTom, Uber, and Zalando, readers will learn how to balance technical excellence with organizational change management to unlock the full potential of generative AI deployments.

The OpenPipe integration in ZenML bridges the complexity of large language model fine-tuning, enabling enterprises to create tailored AI solutions with unprecedented ease and reproducibility.

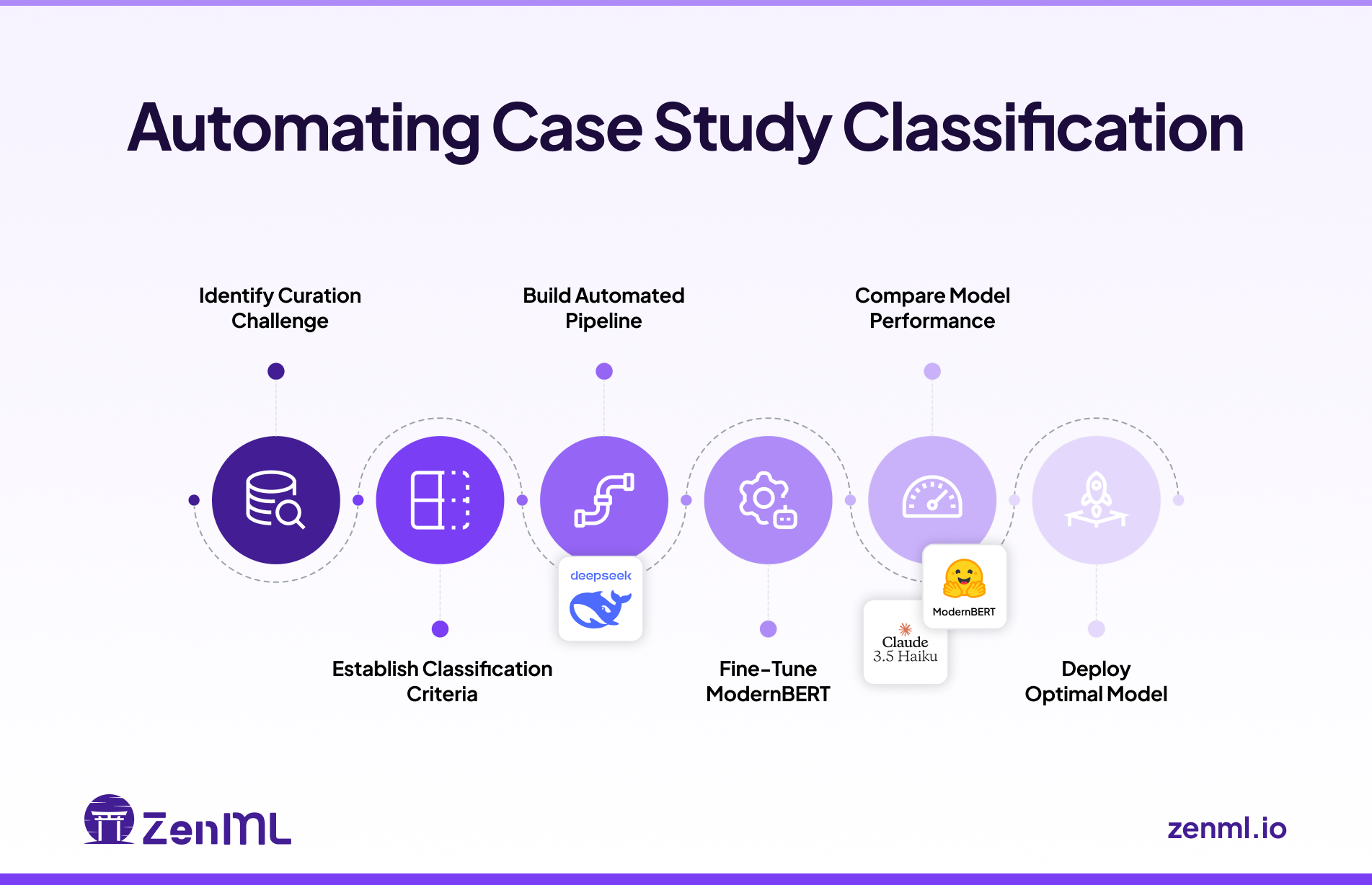

Can automated classification effectively distinguish real-world, production-grade LLM implementations from theoretical discussions? Follow my journey building a reliable LLMOps classification pipeline—moving from manual reviews, through prompt-engineered approaches, to fine-tuning ModernBERT. Discover practical insights, unexpected findings, and why a smaller fine-tuned model proved superior for fast, accurate, and scalable classification.

Are your query rewriting strategies silently hurting your Retrieval-Augmented Generation (RAG) system? Small but unnoticed query errors can quickly degrade user experience, accuracy, and trust. Learn how ZenML's automated evaluation pipelines can systematically detect, measure, and resolve these hidden issues—ensuring that your RAG implementations consistently provide relevant, trustworthy responses.

A comprehensive overview of lessons learned from the world's largest database of LLMOps case studies (457 entries as of January 2025), examining how companies implement and deploy LLMs in production. Through nine thematic blog posts covering everything from RAG implementations to security concerns, this article synthesizes key patterns and anti-patterns in production GenAI deployments, offering practical insights for technical teams building LLM-powered applications.

Learn how leading companies like Dropbox, NVIDIA, and Slack tackle LLM security in production. This comprehensive guide covers practical strategies for preventing prompt injection, securing RAG systems, and implementing multi-layered defenses, based on real-world case studies from the LLMOps database. Discover battle-tested approaches to input validation, data privacy, and monitoring for building secure AI applications.

This comprehensive guide explores strategies for optimizing Large Language Model (LLM) deployments in production environments, focusing on maximizing performance while minimizing costs. Drawing from real-world examples and the LLMOps database, it examines three key areas: model selection and optimization techniques like knowledge distillation and quantization, inference optimization through caching and hardware acceleration, and cost optimization strategies including prompt engineering and self-hosting decisions. The article provides practical insights for technical professionals looking to balance the power of LLMs with operational efficiency.

A comprehensive exploration of real-world lessons in LLM evaluation and quality assurance, examining how industry leaders tackle the challenges of assessing language models in production. Through diverse case studies, the post covers the transition from traditional ML evaluation, establishing clear metrics, combining automated and human evaluation strategies, and implementing continuous improvement cycles to ensure reliable LLM applications at scale.

This post shares practical lessons on prompt engineering in production settings, drawn from real LLMOps case studies. It covers key aspects like designing structured prompts (demonstrated by Canva's incident review system), implementing iterative refinement processes (shown by Fiddler's documentation chatbot), optimizing prompts for scale and efficiency (exemplified by Assembled's test generation system), and building robust management infrastructure (as seen in Weights & Biases' versioning setup). Throughout these examples, the focus remains on systematic improvement through testing, human feedback, and error analysis, while balancing performance with operational costs and complexity.

An in-depth exploration of LLM agents in production environments, covering key architectures, practical challenges, and best practices. Drawing from real-world case studies in the LLMOps Database, this article examines the current state of AI agent deployment, infrastructure requirements, and critical considerations for organizations looking to implement these systems safely and effectively.

Discover how embeddings power modern search and recommendation systems with LLMs, using case studies from the LLMOps Database. From RAG systems to personalized recommendations, learn key strategies and best practices for building intelligent applications that truly understand user intent and deliver relevant results.

Explore real-world applications of Retrieval Augmented Generation (RAG) through case studies from leading companies in the ZenML LLMOps Database. Learn how RAG enhances LLM applications with external knowledge sources, examining implementation strategies, challenges, and best practices for building more accurate and informed AI systems.

The LLMOps Database offers a curated collection of 300+ real-world generative AI implementations, providing technical teams with practical insights into successful LLM deployments. This searchable resource includes detailed case studies, architectural decisions, and AI-generated summaries of technical presentations to help bridge the gap between demos and production systems.

Explore key insights and patterns from 300+ real-world LLM deployments, revealing how companies are successfully implementing AI in production. This comprehensive analysis covers agent architectures, deployment strategies, data infrastructure, and technical challenges, drawing from ZenML's LLMOps Database to highlight practical solutions in areas like RAG, fine-tuning, cost optimization, and evaluation frameworks.

As organizations rush to adopt generative AI, several major tech companies have proposed maturity models to guide this journey. While these frameworks offer useful vocabulary for discussing organizational progress, they should be viewed as descriptive rather than prescriptive guides. Rather than rigidly following these models, organizations are better served by focusing on solving real problems while maintaining strong engineering practices, building on proven DevOps and MLOps principles while adapting to the unique challenges of GenAI implementation.