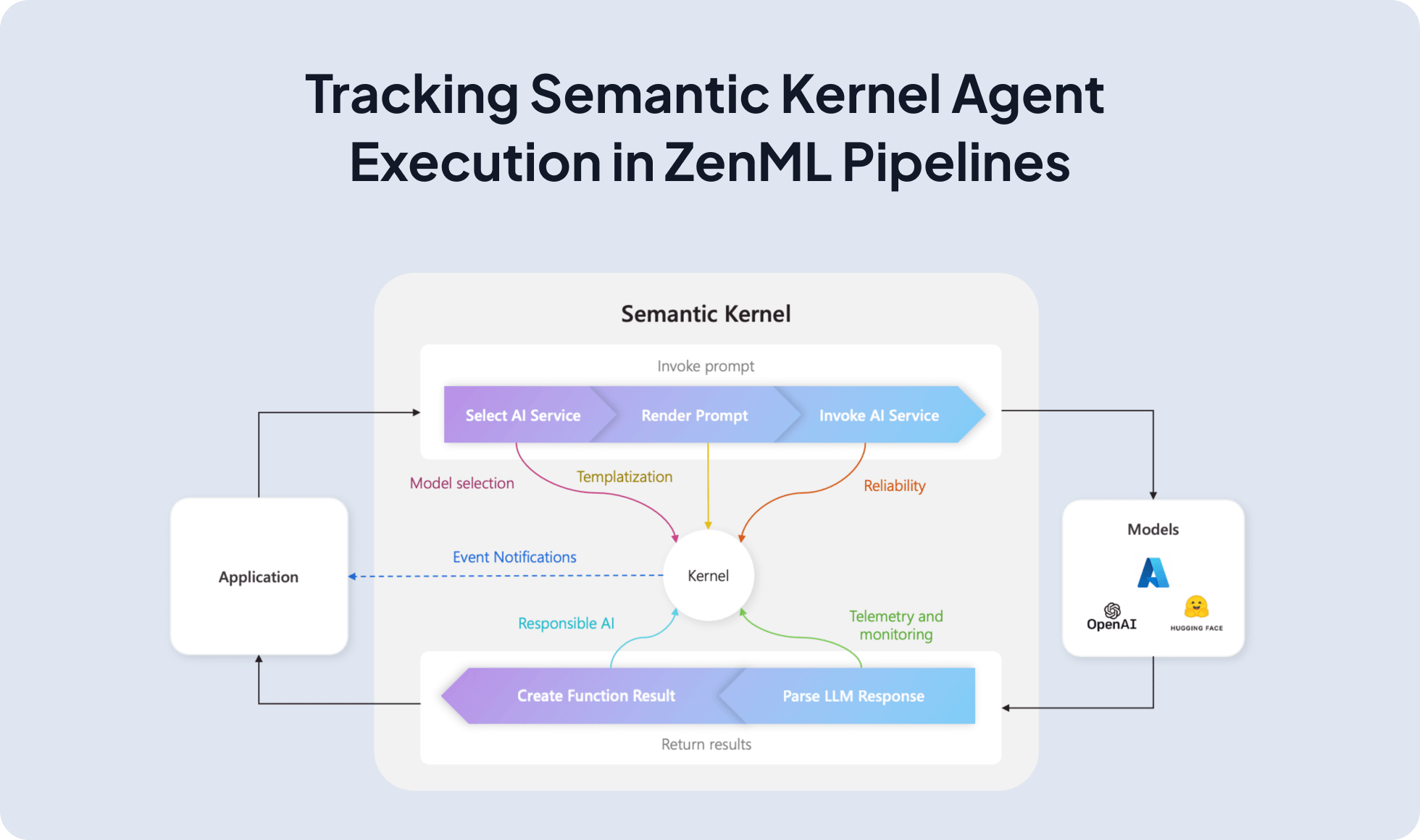

Semantic Kernel lets you build plugin-based agents using @kernel_function, automatic function calling, and async chat with OpenAI. Integrating it with ZenML runs those agents inside reproducible pipelines with artifact lineage and observability, and gives you a path from notebooks to production.

Define tools with @kernel_function, compose them as plugins, and call the resulting kernel from a ZenML step:

    import asyncio

    from semantic_kernel.connectors.ai.function_choice_behavior import FunctionChoiceBehavior
    from semantic_kernel.connectors.ai.open_ai.prompt_execution_settings.open_ai_prompt_execution_settings import (
        OpenAIChatPromptExecutionSettings,
    )
    from semantic_kernel.contents.chat_history import ChatHistory
    from zenml import ExternalArtifact, pipeline, step

    # Project-local module (not shown) that is expected to expose a configured Kernel.
    from semantic_kernel_agent import kernel


    @step
    def run_semantic_kernel(query: str) -> str:
        async def _run() -> str:
            # Look up the chat service registered under the "openai-chat" service ID.
            chat = kernel.get_service("openai-chat")

            history = ChatHistory()
            history.add_user_message(query)

            # Let the model decide when to call the kernel's plugin functions.
            settings = OpenAIChatPromptExecutionSettings()
            settings.function_choice_behavior = FunctionChoiceBehavior.Auto()

            resp = await chat.get_chat_message_content(
                chat_history=history, settings=settings, kernel=kernel
            )
            # Fall back to the raw response if it has no `content` attribute.
            return str(getattr(resp, "content", resp))

        # ZenML steps are synchronous, so drive the coroutine to completion here.
        return asyncio.run(_run())


    @pipeline
    def semantic_kernel_agent_pipeline() -> str:
        q = ExternalArtifact(value="What is the weather in Tokyo?")
        return run_semantic_kernel(q.value)


    if __name__ == "__main__":
        print(semantic_kernel_agent_pipeline())
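The step above wraps an async chat call in a nested coroutine and runs it with `asyncio.run`. The sketch below isolates that pattern using only the standard library. `fake_chat_completion` and `run_agent_sync` are hypothetical stand-ins for the chat service call and the step body, so nothing here touches Semantic Kernel, ZenML, or the network.

```python
import asyncio


async def fake_chat_completion(query: str) -> str:
    """Hypothetical stand-in for an async LLM call; it just echoes the query."""
    await asyncio.sleep(0)  # yield to the event loop, as a real I/O call would
    return f"echo: {query}"


def run_agent_sync(query: str) -> str:
    """Synchronous entry point shaped like the body of a pipeline step."""

    async def _run() -> str:
        return await fake_chat_completion(query)

    # asyncio.run creates a new event loop, runs the coroutine, and closes the loop.
    return asyncio.run(_run())


if __name__ == "__main__":
    print(run_agent_sync("What is the weather in Tokyo?"))
```

Note that `asyncio.run` raises a `RuntimeError` when called from a thread that already has a running event loop, which is worth keeping in mind if the same function is also called from async code.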