Don't make Claude do the same work twice

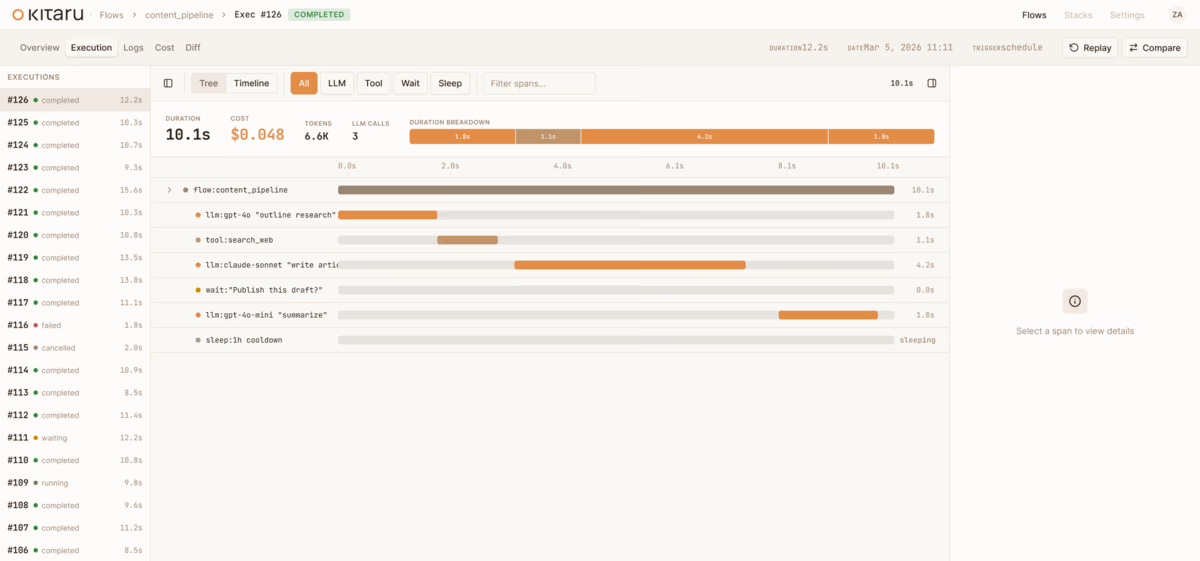

Claude Agent SDK runs the agent loop. Kitaru adds the durable runtime around a completed invocation — checkpointed results, artifacts, replay boundaries, and waits.

LangGraph keeps graph state, threads, and interrupts. Kitaru adds the durable workflow around the graph call — replay boundaries, durable waits, and inspectable runs.

The OpenAI Agents SDK stays the harness; Kitaru adds the runtime around it — durable workflow waits, replay boundaries, and inspectable execution history.

Armin Ronacher's Absurd and Kitaru arrived at the same answers on replay semantics, ephemeral compute, and an agent-legible runtime. Here's why that matters.

What people call the agent stack is really four layers: model, harness, runtime, platform. Conflating them costs durability. The runtime layer, and one split inside it, gets the least attention.

Meet Kitaru: open-source durable execution for Python agents, built by the ZenML team. Crash recovery, human-in-the-loop, and replay from any checkpoint.

Kitaru is live: an open-source infrastructure platform for running Python agents in production.

A production coding agent isn't a prompt and a while loop. It's eight stages, each with different failure modes, costs, and human touchpoints. Here's the full pattern.

ML pipelines were DAGs. Agents are loops. The orchestration layer that worked for training jobs doesn't work for autonomous systems, and the industry is scrambling to catch up.

We spent five years building ML pipeline infrastructure. Then agents showed up and we realized the next problem needed a new tool — not an extension of the old one.

Tracing shows you what went wrong. But what if you could go back, fix the input, and resume from where it failed — without re-running everything?

Every durable execution engine today forces your code to be deterministic. Kitaru takes a different approach — and it matters more than you think.